點擊藍字 ╳ 關注我們

巴延興

深圳開鴻數字產業發展有限公司

資深OS框架開發工程師

一、簡介

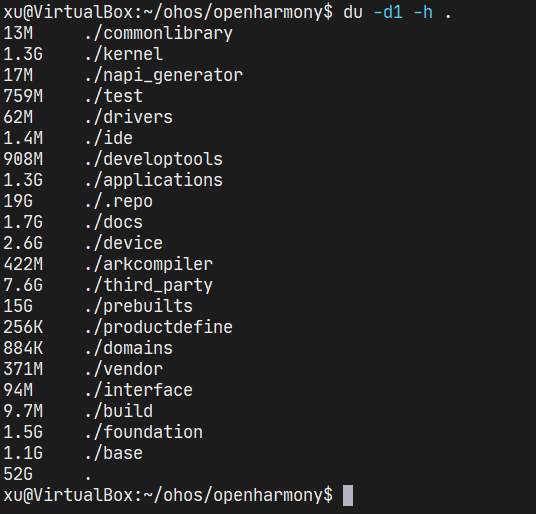

二、目錄

audio_framework

├── frameworks

│ ├── js #js 接口

│ │ └── napi

│ │ └── audio_renderer #audio_renderer NAPI接口

│ │ ├── include

│ │ │ ├── audio_renderer_callback_napi.h

│ │ │ ├── renderer_data_request_callback_napi.h

│ │ │ ├── renderer_period_position_callback_napi.h

│ │ │ └── renderer_position_callback_napi.h

│ │ └── src

│ │ ├── audio_renderer_callback_napi.cpp

│ │ ├── audio_renderer_napi.cpp

│ │ ├── renderer_data_request_callback_napi.cpp

│ │ ├── renderer_period_position_callback_napi.cpp

│ │ └── renderer_position_callback_napi.cpp

│ └── native #native 接口

│ └── audiorenderer

│ ├── BUILD.gn

│ ├── include

│ │ ├── audio_renderer_private.h

│ │ └── audio_renderer_proxy_obj.h

│ ├── src

│ │ ├── audio_renderer.cpp

│ │ └── audio_renderer_proxy_obj.cpp

│ └── test

│ └── example

│ └── audio_renderer_test.cpp

├── interfaces

│ ├── inner_api #native實現的接口

│ │ └── native

│ │ └── audiorenderer #audio渲染本地實現的接口定義

│ │ └── include

│ │ └── audio_renderer.h

│ └── kits #js調用的接口

│ └── js

│ └── audio_renderer #audio渲染NAPI接口的定義

│ └── include

│ └── audio_renderer_napi.h

└── services #服務端

└── audio_service

├── BUILD.gn

├── client #IPC調用中的proxy端

│ ├── include

│ │ ├── audio_manager_proxy.h

│ │ ├── audio_service_client.h

│ └── src

│ ├── audio_manager_proxy.cpp

│ ├── audio_service_client.cpp

└── server #IPC調用中的server端

├── include

│ └── audio_server.h

└── src

├── audio_manager_stub.cpp

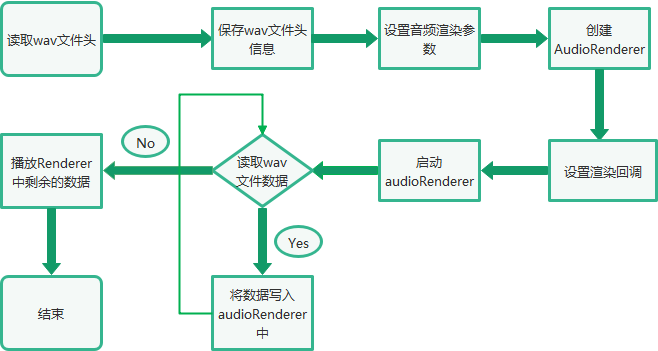

└──audio_server.cpp三、音頻渲染總體流程

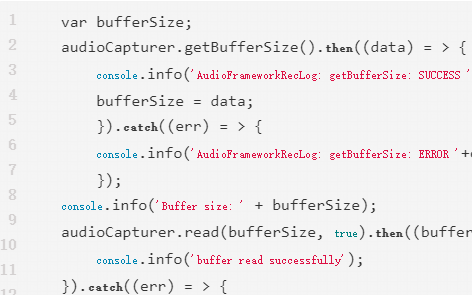

四、Native接口使用

bool TestPlayback(int argc, char *argv[]) const

{

FILE* wavFile = fopen(path, "rb");

//讀取wav文件頭信息

size_t bytesRead = fread(&wavHeader, 1, headerSize, wavFile);

//設置AudioRenderer參數

AudioRendererOptions rendererOptions = {};

rendererOptions.streamInfo.encoding = AudioEncodingType::ENCODING_PCM;

rendererOptions.streamInfo.samplingRate = static_cast(wavHeader.SamplesPerSec);

rendererOptions.streamInfo.format = GetSampleFormat(wavHeader.bitsPerSample);

rendererOptions.streamInfo.channels = static_cast(wavHeader.NumOfChan);

rendererOptions.rendererInfo.contentType = contentType;

rendererOptions.rendererInfo.streamUsage = streamUsage;

rendererOptions.rendererInfo.rendererFlags = 0;

//創建AudioRender實例

unique_ptr audioRenderer = AudioRenderer::Create(rendererOptions);

shared_ptr cb1 = make_shared();

//設置音頻渲染回調

ret = audioRenderer->SetRendererCallback(cb1);

//InitRender方法主要調用了audioRenderer實例的Start方法,啟動音頻渲染

if (!InitRender(audioRenderer)) {

AUDIO_ERR_LOG("AudioRendererTest: Init render failed");

fclose(wavFile);

return false;

}

//StartRender方法主要是讀取wavFile文件的數據,然后通過調用audioRenderer實例的Write方法進行播放

if (!StartRender(audioRenderer, wavFile)) {

AUDIO_ERR_LOG("AudioRendererTest: Start render failed");

fclose(wavFile);

return false;

}

//停止渲染

if (!audioRenderer->Stop()) {

AUDIO_ERR_LOG("AudioRendererTest: Stop failed");

}

//釋放渲染

if (!audioRenderer->Release()) {

AUDIO_ERR_LOG("AudioRendererTest: Release failed");

}

//關閉wavFile

fclose(wavFile);

return true;

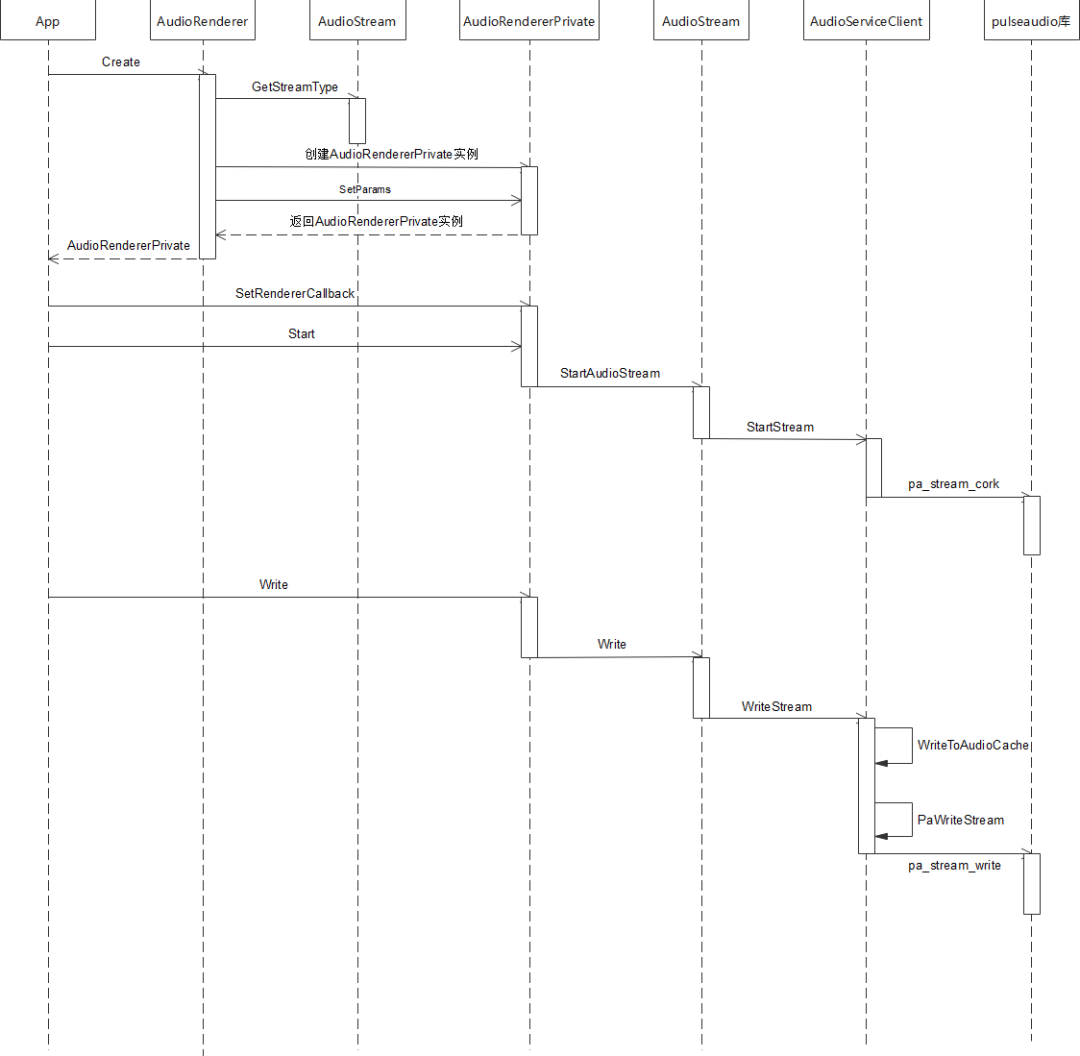

}五、調用流程

std::unique_ptr AudioRenderer::Create(const std::string cachePath,

const AudioRendererOptions &rendererOptions, const AppInfo &appInfo)

{

ContentType contentType = rendererOptions.rendererInfo.contentType;

StreamUsage streamUsage = rendererOptions.rendererInfo.streamUsage;

AudioStreamType audioStreamType = AudioStream::GetStreamType(contentType, streamUsage);

auto audioRenderer = std::make_unique(audioStreamType, appInfo);

if (!cachePath.empty()) {

AUDIO_DEBUG_LOG("Set application cache path");

audioRenderer->SetApplicationCachePath(cachePath);

}

audioRenderer->rendererInfo_.contentType = contentType;

audioRenderer->rendererInfo_.streamUsage = streamUsage;

audioRenderer->rendererInfo_.rendererFlags = rendererOptions.rendererInfo.rendererFlags;

AudioRendererParams params;

params.sampleFormat = rendererOptions.streamInfo.format;

params.sampleRate = rendererOptions.streamInfo.samplingRate;

params.channelCount = rendererOptions.streamInfo.channels;

params.encodingType = rendererOptions.streamInfo.encoding;

if (audioRenderer->SetParams(params) != SUCCESS) {

AUDIO_ERR_LOG("SetParams failed in renderer");

audioRenderer = nullptr;

return nullptr;

}

return audioRenderer;

}int32_t AudioRendererPrivate::SetRendererCallback(const std::shared_ptr &callback)

{

RendererState state = GetStatus();

if (state == RENDERER_NEW || state == RENDERER_RELEASED) {

return ERR_ILLEGAL_STATE;

}

if (callback == nullptr) {

return ERR_INVALID_PARAM;

}

// Save reference for interrupt callback

if (audioInterruptCallback_ == nullptr) {

return ERROR;

}

std::shared_ptr cbInterrupt =

std::static_pointer_cast(audioInterruptCallback_);

cbInterrupt->SaveCallback(callback);

// Save and Set reference for stream callback. Order is important here.

if (audioStreamCallback_ == nullptr) {

audioStreamCallback_ = std::make_shared();

if (audioStreamCallback_ == nullptr) {

return ERROR;

}

}

std::shared_ptr cbStream =

std::static_pointer_cast(audioStreamCallback_);

cbStream->SaveCallback(callback);

(void)audioStream_->SetStreamCallback(audioStreamCallback_);

return SUCCESS;

}bool AudioRendererPrivate::Start(StateChangeCmdType cmdType) const

{

AUDIO_INFO_LOG("AudioRenderer::Start");

RendererState state = GetStatus();

AudioInterrupt audioInterrupt;

switch (mode_) {

case InterruptMode:

audioInterrupt = sharedInterrupt_;

break;

case InterruptMode:

audioInterrupt = audioInterrupt_;

break;

default:

break;

}

AUDIO_INFO_LOG("AudioRenderer: %{public}d, streamType: %{public}d, sessionID: %{public}d",

mode_, audioInterrupt.streamType, audioInterrupt.sessionID);

if (audioInterrupt.streamType == STREAM_DEFAULT || audioInterrupt.sessionID == INVALID_SESSION_ID) {

return false;

}

int32_t ret = AudioPolicyManager::GetInstance().ActivateAudioInterrupt(audioInterrupt);

if (ret != 0) {

AUDIO_ERR_LOG("AudioRendererPrivate::ActivateAudioInterrupt Failed");

return false;

}

return audioStream_->StartAudioStream(cmdType);

}bool AudioStream::StartAudioStream(StateChangeCmdType cmdType)

{

int32_t ret = StartStream(cmdType);

resetTime_ = true;

int32_t retCode = clock_gettime(CLOCK_MONOTONIC, &baseTimestamp_);

if (renderMode_ == RENDER_MODE_CALLBACK) {

isReadyToWrite_ = true;

writeThread_ = std::make_unique<std::thread>(&AudioStream::WriteCbTheadLoop, this);

} else if (captureMode_ == CAPTURE_MODE_CALLBACK) {

isReadyToRead_ = true;

readThread_ = std::make_unique<std::thread>(&AudioStream::ReadCbThreadLoop, this);

}

isFirstRead_ = true;

isFirstWrite_ = true;

state_ = RUNNING;

AUDIO_INFO_LOG("StartAudioStream SUCCESS");

if (audioStreamTracker_) {

AUDIO_DEBUG_LOG("AudioStream:Calling Update tracker for Running");

audioStreamTracker_->UpdateTracker(sessionId_, state_, rendererInfo_, capturerInfo_);

}

return true;

}int32_t AudioServiceClient::StartStream(StateChangeCmdType cmdType)

{

int error;

lock_guard lockdata(dataMutex);

pa_operation *operation = nullptr;

pa_threaded_mainloop_lock(mainLoop);

pa_stream_state_t state = pa_stream_get_state(paStream);

streamCmdStatus = 0;

stateChangeCmdType_ = cmdType;

operation = pa_stream_cork(paStream, 0, PAStreamStartSuccessCb, (void *)this);

while (pa_operation_get_state(operation) == PA_OPERATION_RUNNING) {

pa_threaded_mainloop_wait(mainLoop);

}

pa_operation_unref(operation);

pa_threaded_mainloop_unlock(mainLoop);

if (!streamCmdStatus) {

AUDIO_ERR_LOG("Stream Start Failed");

ResetPAAudioClient();

return AUDIO_CLIENT_START_STREAM_ERR;

} else {

AUDIO_INFO_LOG("Stream Started Successfully");

return AUDIO_CLIENT_SUCCESS;

}

}int32_t AudioRendererPrivate::Write(uint8_t *buffer, size_t bufferSize)

{

return audioStream_->Write(buffer, bufferSize);

}size_t AudioStream::Write(uint8_t *buffer, size_t buffer_size)

{

int32_t writeError;

StreamBuffer stream;

stream.buffer = buffer;

stream.bufferLen = buffer_size;

isWriteInProgress_ = true;

if (isFirstWrite_) {

if (RenderPrebuf(stream.bufferLen)) {

return ERR_WRITE_FAILED;

}

isFirstWrite_ = false;

}

size_t bytesWritten = WriteStream(stream, writeError);

isWriteInProgress_ = false;

if (writeError != 0) {

AUDIO_ERR_LOG("WriteStream fail,writeError:%{public}d", writeError);

return ERR_WRITE_FAILED;

}

return bytesWritten;

}size_t AudioServiceClient::WriteStream(const StreamBuffer &stream, int32_t &pError)

{

size_t cachedLen = WriteToAudioCache(stream);

if (!acache.isFull) {

pError = error;

return cachedLen;

}

pa_threaded_mainloop_lock(mainLoop);

const uint8_t *buffer = acache.buffer.get();

size_t length = acache.totalCacheSize;

error = PaWriteStream(buffer, length);

acache.readIndex += acache.totalCacheSize;

acache.isFull = false;

if (!error && (length >= 0) && !acache.isFull) {

uint8_t *cacheBuffer = acache.buffer.get();

uint32_t offset = acache.readIndex;

uint32_t size = (acache.writeIndex - acache.readIndex);

if (size > 0) {

if (memcpy_s(cacheBuffer, acache.totalCacheSize, cacheBuffer + offset, size)) {

AUDIO_ERR_LOG("Update cache failed");

pa_threaded_mainloop_unlock(mainLoop);

pError = AUDIO_CLIENT_WRITE_STREAM_ERR;

return cachedLen;

}

AUDIO_INFO_LOG("rearranging the audio cache");

}

acache.readIndex = 0;

acache.writeIndex = 0;

if (cachedLen < stream.bufferLen) {

StreamBuffer str;

str.buffer = stream.buffer + cachedLen;

str.bufferLen = stream.bufferLen - cachedLen;

AUDIO_DEBUG_LOG("writing pending data to audio cache: %{public}d", str.bufferLen);

cachedLen += WriteToAudioCache(str);

}

}

pa_threaded_mainloop_unlock(mainLoop);

pError = error;

return cachedLen;

}六、總結

原文標題:OpenHarmony 3.2 Beta Audio——音頻渲染

文章出處:【微信公眾號:OpenAtom OpenHarmony】歡迎添加關注!文章轉載請注明出處。

聲明:本文內容及配圖由入駐作者撰寫或者入駐合作網站授權轉載。文章觀點僅代表作者本人,不代表電子發燒友網立場。文章及其配圖僅供工程師學習之用,如有內容侵權或者其他違規問題,請聯系本站處理。

舉報投訴

-

鴻蒙

+關注

關注

57文章

2311瀏覽量

42747 -

OpenHarmony

+關注

關注

25文章

3661瀏覽量

16159

原文標題:OpenHarmony 3.2 Beta Audio——音頻渲染

文章出處:【微信號:gh_e4f28cfa3159,微信公眾號:OpenAtom OpenHarmony】歡迎添加關注!文章轉載請注明出處。

發布評論請先 登錄

相關推薦

請問cc3200 audio boosterpack音頻采集是不是底噪很大?

基于TLV320AIC3254的音頻開發辦,我燒入wifi_audio_app例程(例程中關掉板載咪頭輸入,并將音量調到最大),另兩入輸入接口沒有接音頻信號,但是板子一直吱吱吱的響,是板子本身底噪就這么大嗎?

發表于 10-25 06:28

TPS6595 Audio Codec輸出音頻偶發混入7Khz雜波是怎么回事?

主芯片是DM3730, 音頻使用的是TPS65950的Audio 外設。

DM3730使用MCBSP輸出8Khz音頻數據,通過I2C設置 TPS65950相關寄存器。 采用Audio

發表于 10-15 07:08

HarmonyOS NEXT Developer Beta1中的Kit

管理服務)、Network Kit(網絡服務)等。

媒體相關Kit開放能力:Audio Kit(音頻服務)、Media Library Kit(媒體文件管理服務)等。

圖形相關Kit開放能力

發表于 06-26 10:47

使用提供的esp_audio_codec 的庫組件時,不能將AAC音頻解碼回PCM音頻,為什么?

使用提供的esp_audio_codec 的庫組件時,能夠將PCM音頻編碼為AAC音頻,但是不能將AAC音頻解碼回PCM音頻,是為什么導致的

發表于 06-05 06:39

【RTC程序設計:實時音視頻權威指南】音頻采集與渲染

在進行視頻的采集與渲染的同時,我們還需要對音頻進行實時的采集和渲染。對于rtc來說,音頻的實時性和流暢性更加重要。

聲音是由于物體在空氣中振動而產生的壓力波,聲波的存在依賴于空氣介質,

發表于 04-28 21:00

【開源鴻蒙】下載OpenHarmony 4.1 Release源代碼

本文介紹了如何下載開源鴻蒙(OpenHarmony)操作系統 4.1 Release版本的源代碼,該方法同樣可以用于下載OpenHarmony最新開發版本(master分支)或者4.0 Release、3.2 Release等發

鴻蒙ArkUI開發學習:【渲染控制語法】

ArkUI開發框架是一套構建 HarmonyOS / OpenHarmony 應用界面的聲明式UI開發框架,它支持程序使用?`if/else`?條件渲染,?`ForEach`?循環渲染以及?`LazyForEach`?懶加載

潤開鴻基于OpenHarmony的全場景應用開發實訓平臺通過兼容性測評

近日,江蘇潤開鴻數字科技有限公司(以下簡稱“潤開鴻”)基于OpenHarmony的全場景應用開發實訓平臺通過OpenHarmony3.2.Release版本兼容性測評,為高校開展

藍牙midi和藍牙音頻或者藍牙audio有什么區別呢

藍牙midi和藍牙音頻或者藍牙audio有什么區別呢

首先這里分為三個概念,也就是什么是藍牙?什么是藍牙midi?什么是藍牙音頻audio?

1、什么是藍牙,這個就不用贅述了,大家

OpenHarmony Sheet 表格渲染引擎

基于 Canvas 實現的高性能 Excel 表格引擎組件 [OpenHarmonySheet]。

由于大部分前端項目渲染層是使用框架根據排版模型樹結構逐層渲染的,整棵渲染樹也是與排版

發表于 01-05 16:32

OpenHarmony開源GPU庫Mesa3D適配說明

本文檔主要講解在OpenHarmony中,Mesa3D的適配方法及原理說明。

環境說明:

OHOS版本: 適用3.2-Beta3及以上

內核版本: linux-5.10

硬件環境

發表于 12-25 11:38

搭載KaihongOS的高動態人形機器人“夸父”通過OpenHarmony 3.2 Release版本兼容性測評

“OpenHarmony”)3.2 Release版本兼容性測評并獲頒兼容性證書 。這體現了深圳開鴻數字產業發展有限公司(以下簡稱”深開鴻“)OpenHarmony生 態建設能力和在新興行業領域

高動態人形機器人“夸父”通過OpenHarmony 3.2 Release版本兼容性測評

近日, 搭載KaihongOS的“夸父”人形機器人通過OpenAtom OpenHarmony(以下簡稱“OpenHarmony”)3.2 Release版本兼容性測評并獲頒兼容性證書 。這體現了

發表于 12-20 09:31

搭載KaihongOS的高動態人形機器人“夸父”通過OpenHarmony3.2 Release版本兼容性測評

近日,搭載KaihongOS的國內首款可跳躍、可適應多地形行走的開源鴻蒙人形機器人通過OpenAtom OpenHarmony(以下簡稱“OpenHarmony”)3.2 Release版本

OpenHarmony 3.2 Beta Audio——音頻渲染

OpenHarmony 3.2 Beta Audio——音頻渲染

評論