一、cephadm介紹

從紅帽ceph5開始使用cephadm代替之前的ceph-ansible作為管理整個集群生命周期的工具,包括部署,管理,監控。

cephadm引導過程在單個節點(bootstrap節點)上創建一個小型存儲集群,包括一個Ceph Monitor和一個Ceph Manager,以及任何所需的依賴項。

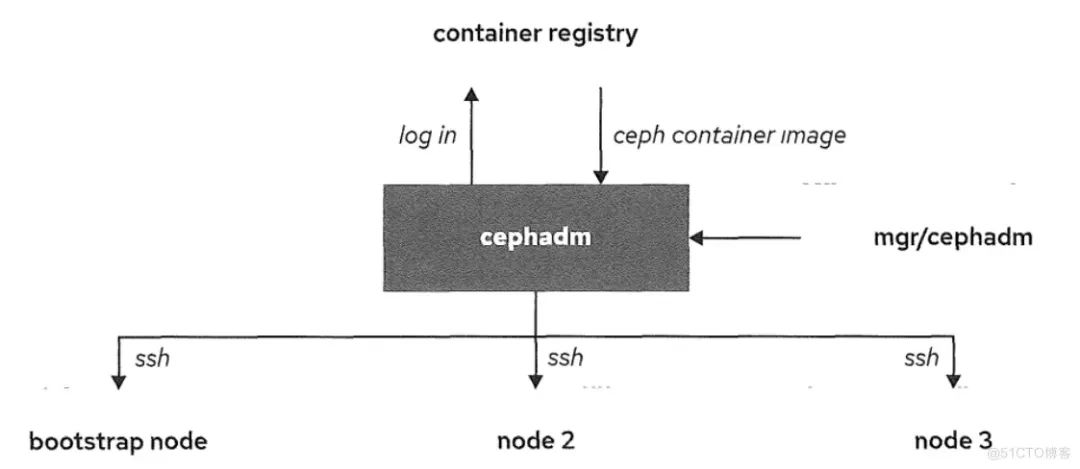

如下圖所示:

cephadm可以登錄到容器倉庫來拉取ceph鏡像和使用對應鏡像來在對應ceph節點進行部署。ceph容器鏡像對于部署ceph集群是必須的,因為被部署的ceph容器是基于那些鏡像。

為了和ceph集群節點通信,cephadm使用ssh。通過使用ssh連接,cephadm可以向集群中添加主機,添加存儲和監控那些主機。

該節點讓集群up的軟件包就是cepadm,podman或docker,python3和chrony。這個容器化的版本減少了ceph集群部署的復雜性和依賴性。

1. python3

yum -y install python3

2. podman or docker, to run the containers

# Install docker-ce from the Alibaba Cloud mirror

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

sed -i 's+download.docker.com+mirrors.aliyun.com/docker-ce+' /etc/yum.repos.d/docker-ce.repo

yum -y install docker-ce

systemctl enable docker --now

# Configure a registry mirror

mkdir -p /etc/docker

tee /etc/docker/daemon.json <<-'EOF'
{
  "registry-mirrors": ["https://bp1bh1ga.mirror.aliyuncs.com"]
}
EOF

systemctl daemon-reload

systemctl restart docker

3. Time synchronization (for example chrony or NTP)
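The three prerequisites above can be checked on a node before it is bootstrapped or added. The following is a minimal sketch that is not part of the original article: the function names `missing_commands` and `check_prereqs` are hypothetical, and it assumes that finding `chronyd` on PATH stands in for time synchronization and `lvcreate` for lvm2 (the bootstrap output later in this article verifies both).

```shell
#!/usr/bin/env bash
# Hypothetical helpers: report prerequisites that are not installed.

# Print every given command name that cannot be found on PATH.
missing_commands() {
    local cmd
    for cmd in "$@"; do
        command -v "$cmd" >/dev/null 2>&1 || printf '%s\n' "$cmd"
    done
}

# python3, chronyd and lvcreate are all required;
# for the container runtime, either podman or docker is enough.
check_prereqs() {
    missing_commands python3 chronyd lvcreate
    if [ -n "$(missing_commands podman)" ] && [ -n "$(missing_commands docker)" ]; then
        printf '%s\n' "podman-or-docker"
    fi
}

# Prints one line per missing prerequisite; no output means nothing is missing.
check_prereqs
```

If `chronyd` is reported as missing, installing it with `yum -y install chrony` and enabling it with `systemctl enable --now chronyd` is one way to satisfy item 3, assuming the same yum-based system used throughout this article.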

2. Preparation before deploying the Ceph cluster

2.1 Node preparation

| Node name | OS | IP address | Ceph role | Disks |
| --- | --- | --- | --- | --- |
| node1 | Rocky Linux release 8.6 | 172.24.1.6 | mon, mgr, server, admin node | /dev/vdb, /dev/vdc, /dev/vdd |
| node2 | Rocky Linux release 8.6 | 172.24.1.7 | mon, mgr | /dev/vdb, /dev/vdc, /dev/vdd |
| node3 | Rocky Linux release 8.6 | 172.24.1.8 | mon, mgr | /dev/vdb, /dev/vdc, /dev/vdd |
| node4 | Rocky Linux release 8.6 | 172.24.1.9 | client, admin node | |

2.2 Edit /etc/hosts on every node

172.24.1.6 node1

172.24.1.7 node2

172.24.1.8 node3

172.24.1.9 node4
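Because the same entries have to be added on every node, it can help to add them idempotently, so that running the step twice does not duplicate lines. The following is a minimal sketch under stated assumptions: `add_host_entry` is a hypothetical helper, not something the article or cephadm provides, and the demonstration writes to a scratch file rather than to /etc/hosts.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP NAME" to a hosts file unless NAME is already mapped.
# Usage: add_host_entry FILE IP NAME
add_host_entry() {
    local file=$1 ip=$2 name=$3
    # awk exits 0 only if NAME appears as a hostname field on a non-comment line.
    if awk -v n="$name" '
            /^[[:space:]]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }
        ' "$file" 2>/dev/null; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Demonstration on a scratch file; pass /etc/hosts as FILE (as root) to apply for real.
scratch=$(mktemp)
add_host_entry "$scratch" 172.24.1.6 node1
add_host_entry "$scratch" 172.24.1.7 node2
add_host_entry "$scratch" 172.24.1.8 node3
add_host_entry "$scratch" 172.24.1.9 node4
add_host_entry "$scratch" 172.24.1.9 node4   # repeated call adds nothing
cat "$scratch"
rm -f "$scratch"
```

Matching on the hostname field rather than on the whole line means an existing mapping for a node is left alone even if its IP address differs, which is a deliberate choice here to avoid creating conflicting entries.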

2.3 Set up passwordless SSH login from node1

[root@node1 ~]# ssh-keygen

[root@node1 ~]# ssh-copy-id root@node2

[root@node1 ~]# ssh-copy-id root@node3

[root@node1 ~]# ssh-copy-id root@node4

3. Install cephadm on node1

1. Install the EPEL repository

[root@node1 ~]# yum -y install epel-release

2. Install the Ceph repository

[root@node1 ~]# yum search release-ceph

Last metadata expiration check: 014 ago on Tuesday, 14 February 2023, 14:22:00.

================= Name Matched: release-ceph ============================================

centos-release-ceph-nautilus.noarch : Ceph Nautilus packages from the CentOS Storage SIG repository

centos-release-ceph-octopus.noarch : Ceph Octopus packages from the CentOS Storage SIG repository

centos-release-ceph-pacific.noarch : Ceph Pacific packages from the CentOS Storage SIG repository

centos-release-ceph-quincy.noarch : Ceph Quincy packages from the CentOS Storage SIG repository

[root@node1 ~]# yum -y install centos-release-ceph-pacific.noarch

3. Install cephadm

[root@node1 ~]# yum -y install cephadm

4. Install ceph-common

[root@node1 ~]# yum -y install ceph-common

4. Install docker-ce and python3 on the other nodes

Follow the steps in Section 1.

5. Deploy the Ceph cluster

5.1 Bootstrap the Ceph cluster and install the dashboard (the graphical management interface) at the same time

[root@node1 ~]# cephadm bootstrap --mon-ip 172.24.1.6 --allow-fqdn-hostname --initial-dashboard-user admin --initial-dashboard-password redhat --dashboard-password-noupdate

Verifying podman|docker is present...

Verifying lvm2 is present...

Verifying time synchronization is in place...

Unit chronyd.service is enabled and running

Repeating the final host check...

docker (/usr/bin/docker) is present

systemctl is present

lvcreate is present

Unit chronyd.service is enabled and running

Host looks OK

Cluster fsid: 0b565668-ace4-11ed-960c-5254000de7a0

Verifying IP 172.24.1.6 port 3300 ...

Verifying IP 172.24.1.6 port 6789 ...

Mon IP `172.24.1.6` is in CIDR network `172.24.1.0/24` - internal network (--cluster-network) has not been provided, OSD replication will default to the public_network

Pulling container image quay.io/ceph/ceph:v16...

Ceph version: ceph version 16.2.11 (3cf40e2dca667f68c6ce3ff5cd94f01e711af894) pacific (stable)

Extracting ceph user uid/gid from container image...

Creating initial keys...

Creating initial monmap...

Creating mon...

Waiting for mon to start...

Waiting for mon...

mon is available

Assimilating anything we can from ceph.conf...

Generating new minimal ceph.conf...

Restarting the monitor...

Setting mon public_network to 172.24.1.0/24

Wrote config to /etc/ceph/ceph.conf

Wrote keyring to /etc/ceph/ceph.client.admin.keyring

Creating mgr...

Verifying port 9283 ...

Waiting for mgr to start...

Waiting for mgr...

mgr not available, waiting (1/15)...

mgr not available, waiting (2/15)...

mgr not available, waiting (3/15)...

mgr is available

Enabling cephadm module...

Waiting for the mgr to restart...

Waiting for mgr epoch 5...

mgr epoch 5 is available

Setting orchestrator backend to cephadm...

Generating ssh key...

Wrote public SSH key to /etc/ceph/ceph.pub

Adding key to root@localhost authorized_keys...

Adding host node1...

Deploying mon service with default placement...

Deploying mgr service with default placement...

Deploying crash service with default placement...

Deploying prometheus service with default placement...

Deploying grafana service with default placement...

Deploying node-exporter service with default placement...

Deploying alertmanager service with default placement...

Enabling the dashboard module...

Waiting for the mgr to restart...

Waiting for mgr epoch 9...

mgr epoch 9 is available

Generating a dashboard self-signed certificate...

Creating initial admin user...

Fetching dashboard port number...

Ceph Dashboard is now available at:

URL: https://node1.domain1.example.com:8443/

User: admin

Password: redhat

Enabling client.admin keyring and conf on hosts with "admin" label

You can access the Ceph CLI with:

sudo /usr/sbin/cephadm shell --fsid 0b565668-ace4-11ed-960c-5254000de7a0 -c /etc/ceph/ceph.conf -k /etc/ceph/ceph.client.admin.keyring

Please consider enabling telemetry to help improve Ceph:

ceph telemetry on

For more information see:

https://docs.ceph.com/docs/pacific/mgr/telemetry/

Bootstrap complete.

5.2 Copy the cluster's public key to the nodes that will join the cluster

[root@node1 ~]# ssh-copy-id -f -i /etc/ceph/ceph.pub root@node2

[root@node1 ~]# ssh-copy-id -f -i /etc/ceph/ceph.pub root@node3

[root@node1 ~]# ssh-copy-id -f -i /etc/ceph/ceph.pub root@node4

5.3 Add node2, node3, and node4 (docker-ce and python3 must already be installed on each node)

[root@node1 ~]# ceph orch host add node2 172.24.1.7

Added host 'node2' with addr '172.24.1.7'

[root@node1 ~]# ceph orch host add node3 172.24.1.8

Added host 'node3' with addr '172.24.1.8'

[root@node1 ~]# ceph orch host add node4 172.24.1.9

Added host 'node4' with addr '172.24.1.9'
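Steps 5.2 and 5.3 repeat the same two commands for each host, so they can be generated from a host list. The following is a minimal sketch, not the article's own method: `enroll_commands` is a hypothetical helper that reads "IP NAME" lines (the same layout as the /etc/hosts entries in Section 2.2) and only prints the commands, so they can be reviewed before anything is run.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read "IP NAME" lines on stdin and print, for each host,
# the key-copy command from step 5.2 and the host-add command from step 5.3.
enroll_commands() {
    local ip name rest
    while read -r ip name rest; do
        # Skip blank lines, comment lines, and lines without a hostname.
        case "$ip" in ''|'#'*) continue ;; esac
        [ -n "$name" ] || continue
        printf 'ssh-copy-id -f -i /etc/ceph/ceph.pub root@%s\n' "$name"
        printf 'ceph orch host add %s %s\n' "$name" "$ip"
    done
}

# Nothing is executed here; the commands are only printed.
enroll_commands <<'EOF'
172.24.1.7 node2
172.24.1.8 node3
172.24.1.9 node4
EOF
```

Once the printed commands look right, piping the same output to `sh` on node1 would run them in order. Printing first is a deliberate choice, because `ssh-copy-id` may prompt for each node's root password.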

5.4 Add the admin label to node1 and node4, and copy the Ceph configuration file and keyring to node4

[root@node1 ~]# ceph orch host label add node1 _admin

Added label _admin to host node1

[root@node1 ~]# ceph orch host label add node4 _admin

Added label _admin to host node4

[root@node1 ~]# scp /etc/ceph/{*.conf,*.keyring} root@node4:/etc/ceph

[root@node1 ~]# ceph orch host ls

HOST ADDR LABELS STATUS

node1 172.24.1.6 _admin

node2 172.24.1.7

node3 172.24.1.8

node4 172.24.1.9 _admin

5.5 Add mon daemons

[root@node1 ~]# ceph orch apply mon "node1,node2,node3"

Scheduled mon update...

5.6 Add mgr daemons

[root@node1 ~]# ceph orch apply mgr --placement="node1,node2,node3"

Scheduled mgr update...
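The same mon and mgr placement can also be written as a service specification file, which keeps the desired layout in one place. The following is a minimal sketch, not taken from the original article: `service_spec` is a hypothetical helper that prints a spec with an explicit host list, and the file name used in the note afterwards is likewise only an example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a cephadm service spec that places one service
# type on an explicit list of hosts.
# Usage: service_spec TYPE HOST...
service_spec() {
    local svc=$1 host
    shift
    printf 'service_type: %s\n' "$svc"
    printf 'placement:\n  hosts:\n'
    for host in "$@"; do
        printf '    - %s\n' "$host"
    done
}

# Two YAML documents, separated by "---", covering steps 5.5 and 5.6.
service_spec mon node1 node2 node3
printf '%s\n' '---'
service_spec mgr node1 node2 node3
```

Redirecting this output to a file such as cluster-spec.yaml and running `ceph orch apply -i cluster-spec.yaml` on node1 should schedule the same updates as the two commands above.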

5.7 Add OSDs

[root@node1 ~]# ceph orch daemon add osd node1:/dev/vdb

[root@node1 ~]# ceph orch daemon add osd node1:/dev/vdc

[root@node1 ~]# ceph orch daemon add osd node1:/dev/vdd

[root@node1 ~]# ceph orch daemon add osd node2:/dev/vdb

[root@node1 ~]# ceph orch daemon add osd node2:/dev/vdc

[root@node1 ~]# ceph orch daemon add osd node2:/dev/vdd

[root@node1 ~]# ceph orch daemon add osd node3:/dev/vdb

[root@node1 ~]# ceph orch daemon add osd node3:/dev/vdc

[root@node1 ~]# ceph orch daemon add osd node3:/dev/vdd

Or, equivalently, as a loop:

[root@node1 ~]# for i in node1 node2 node3; do for j in vdb vdc vdd; do ceph orch daemon add osd $i:/dev/$j; done; done

Created osd(s) 0 on host 'node1'

Created osd(s) 1 on host 'node1'

Created osd(s) 2 on host 'node1'

Created osd(s) 3 on host 'node2'

Created osd(s) 4 on host 'node2'

Created osd(s) 5 on host 'node2'

Created osd(s) 6 on host 'node3'

Created osd(s) 7 on host 'node3'

Created osd(s) 8 on host 'node3'

Listing the devices afterwards shows that each one has been consumed by an OSD (LVM detected, locked) and is no longer available:

[root@node1 ~]# ceph orch device ls

HOST PATH TYPE DEVICE ID SIZE AVAILABLE REFRESHED REJECT REASONS

node1 /dev/vdb hdd 10.7G 4m ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node1 /dev/vdc hdd 10.7G 4m ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node1 /dev/vdd hdd 10.7G 4m ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node2 /dev/vdb hdd 10.7G 3m ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node2 /dev/vdc hdd 10.7G 3m ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node2 /dev/vdd hdd 10.7G 3m ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node3 /dev/vdb hdd 10.7G 90s ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node3 /dev/vdc hdd 10.7G 90s ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node3 /dev/vdd hdd 10.7G 90s ago Insufficient space (<10 extents) on vgs, LVM detected, locked

5.8 The Ceph cluster deployment is now complete!

[root@node1 ~]# ceph -s

cluster:

id: 0b565668-ace4-11ed-960c-5254000de7a0

health: HEALTH_OK

services:

mon: 3 daemons, quorum node1,node2,node3 (age 7m)

mgr: node1.cxtokn(active, since 14m), standbys: node2.heebcb, node3.fsrlxu

osd: 9 osds: 9 up (since 59s), 9 in (since 81s)

data:

pools: 1 pools, 1 pgs

objects: 0 objects, 0 B

usage: 53 MiB used, 90 GiB / 90 GiB avail

pgs: 1 active+clean
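The status output above can also be checked from a script instead of by eye. The following is a minimal sketch that assumes the `ceph -s` text layout shown in this article; `cluster_ready` is a hypothetical helper, and the sample it is run against is copied from the output above rather than from a live cluster.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `ceph -s` output on stdin and succeed only if
# health is HEALTH_OK and every OSD is both up and in.
cluster_ready() {
    awk '
        $1 == "health:" { health = $2 }
        $1 == "osd:" {
            total = $2
            for (i = 3; i <= NF; i++) {
                word = $i
                gsub(/,/, "", word)          # tolerate "up," and "in,"
                if (word == "up") n_up = $(i - 1)
                if (word == "in") n_in = $(i - 1)
            }
        }
        END { exit !(health == "HEALTH_OK" && total > 0 && n_up == total && n_in == total) }
    '
}

# Sample lines copied from the status output in this section.
sample='health: HEALTH_OK
osd: 9 osds: 9 up (since 59s), 9 in (since 81s)'

if printf '%s\n' "$sample" | cluster_ready; then
    echo "cluster is ready"
else
    echo "cluster is not ready"
fi
```

On a node with the ceph CLI configured, `ceph -s | cluster_ready` would apply the same check to the live cluster. Parsing the human-readable text is fragile across Ceph releases, so this is best treated as a quick check rather than as monitoring.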

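5.9 Manage Ceph from node4

# The Ceph configuration file and keyring were already copied to node4 in Section 5.4

[root@node4 ~]# ceph -s

-bash: ceph: command not found

# The ceph command is missing, so ceph-common needs to be installed

# Install the Ceph repository

[root@node4 ~]# yum -y install centos-release-ceph-pacific.noarch

# Install ceph-common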

[root@node4 ~]# yum -y install ceph-common

[root@node4 ~]# ceph -s

cluster:

id: 0b565668-ace4-11ed-960c-5254000de7a0

health: HEALTH_OK

services:

mon: 3 daemons, quorum node1,node2,node3 (age 7m)

mgr: node1.cxtokn(active, since 14m), standbys: node2.heebcb, node3.fsrlxu

osd: 9 osds: 9 up (since 59s), 9 in (since 81s)

data:

pools: 1 pools, 1 pgs

objects: 0 objects, 0 B

usage: 53 MiB used, 90 GiB / 90 GiB avail

pgs: 1 active+clean

Reviewed and edited by: Liu Qing

Original title: Deploying a Ceph Cluster with cephadm

Source: WeChat ID magedu-Linux, WeChat official account 馬哥Linux運維. Please credit the source when reposting.
