The kernel's workqueue implementation keeps changing, and most kernel books are too old to keep up with it.

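Workqueue is an important kernel mechanism, especially for drivers. A small task (work) usually does not get a thread of its own; it is handed to a workqueue instead. The main job of a workqueue is to run the kernel's many small tasks in process context.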

The code analysis in this article is based on Linux kernel 3.18.22. The best way to learn is still to "read the fucking source code".

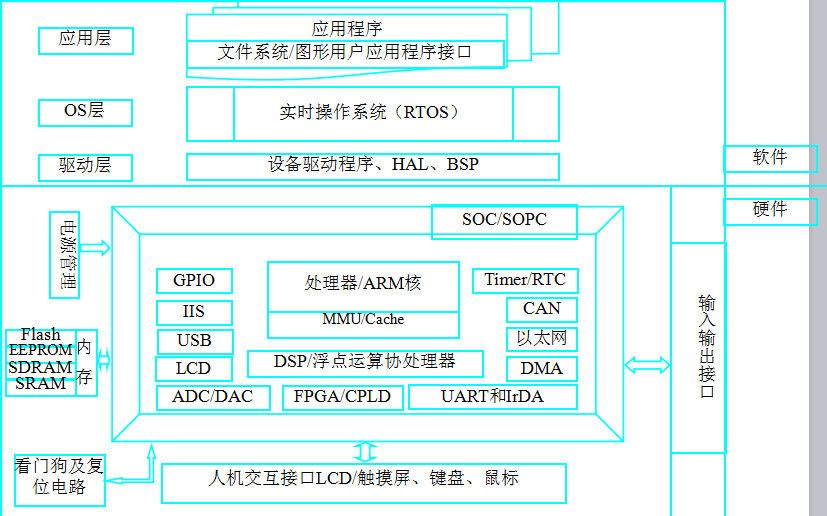

1. Basic concepts of CMWQ

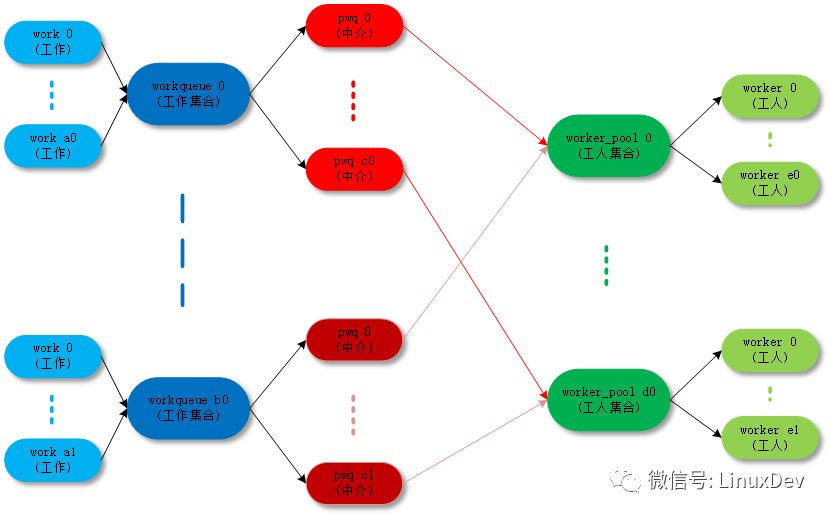

The workqueue data structures are all named after "work" and are easy to confuse. They can be understood roughly as follows:

work: a unit of work to be done.

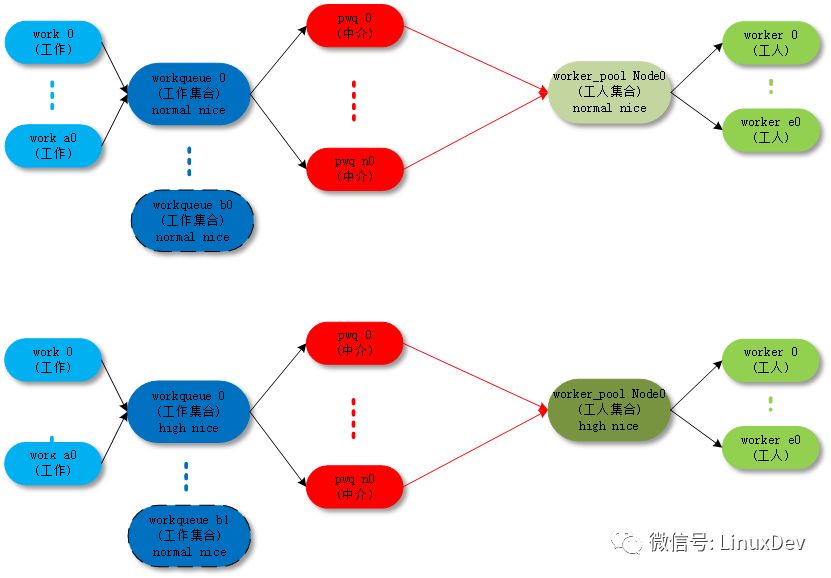

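workqueue: a collection of work. workqueue to work is one-to-many.

worker: a worker. In the code, each worker corresponds to one worker_thread() kernel thread.

worker_pool: a collection of workers. worker_pool to worker is one-to-many.

pwq (pool_workqueue): the middleman that connects a workqueue to a worker_pool. workqueue to pwq is one-to-many, and each pwq points to exactly one worker_pool (a worker_pool can still be shared by the pwqs of different workqueues).

The end goal is simply to hand each work to a worker for execution. The intermediate data structures and relationships exist to organize that handoff clearly and efficiently.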
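The relationships above can be sketched with plain C structs. This is a user-space model, not the kernel's definitions: the `*_model` type names, `MAX_PWQS` and `link_pool()` are hypothetical and exist only for illustration. It assumes that two workqueues may reach the same worker_pool through separate pwqs.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_PWQS 4	/* hypothetical limit, for the model only */

struct workqueue_model;

struct worker_pool_model {
	int id;
	int nr_workers;			/* one pool owns many workers */
};

struct pwq_model {
	struct workqueue_model *wq;	/* the workqueue this pwq belongs to */
	struct worker_pool_model *pool;	/* exactly one pool per pwq */
};

struct workqueue_model {
	const char *name;
	struct pwq_model pwqs[MAX_PWQS];	/* one workqueue, many pwqs */
	size_t nr_pwqs;
};

/* Connect @wq to @pool through a new pwq; returns NULL when @wq is full. */
static struct pwq_model *link_pool(struct workqueue_model *wq,
				   struct worker_pool_model *pool)
{
	struct pwq_model *pwq;

	assert(wq != NULL && pool != NULL);
	if (wq->nr_pwqs == MAX_PWQS)
		return NULL;

	pwq = &wq->pwqs[wq->nr_pwqs++];
	pwq->wq = wq;
	pwq->pool = pool;
	return pwq;
}
```

Linking two different workqueue models to the same pool model yields two distinct pwqs that share one pool pointer, which is the sharing pattern used by the per-CPU pools.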

1.1 worker_pool

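Each thread that executes work is called a worker, and a group of workers is called a worker_pool. The essence of CMWQ lies in how a worker_pool dynamically adds and removes workers, which is done in manage_workers().

CMWQ divides worker_pools into two classes:

normal worker_pool, used by ordinary workqueues;

unbound worker_pool, used by workqueues of type WQ_UNBOUND;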

1.1.1 normal worker_pool

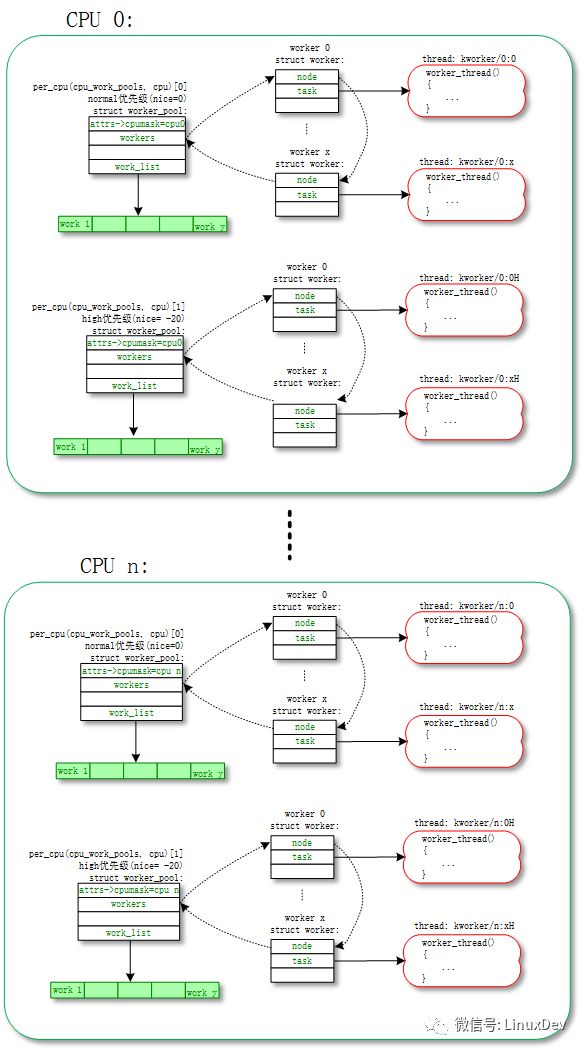

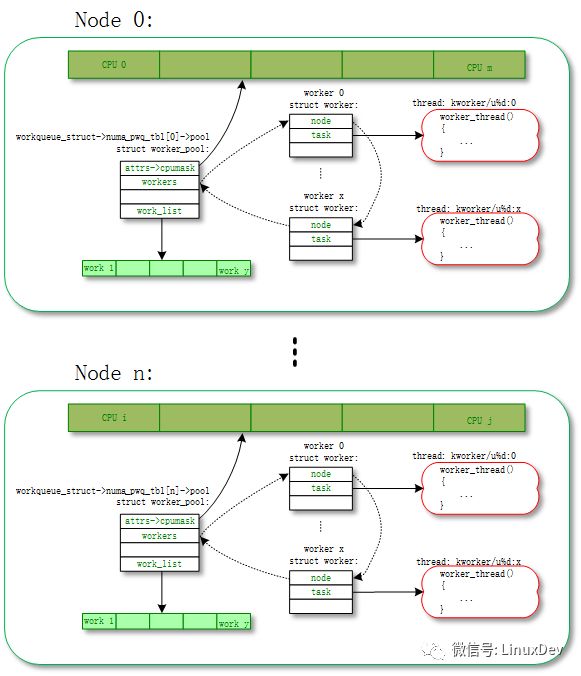

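By default, work is processed in a normal worker_pool. The system creates two normal worker_pools per CPU: one with normal priority (nice=0) and one with high priority (nice=HIGHPRI_NICE_LEVEL). The workers they create run with the corresponding nice value.

Each worker corresponds to one worker_thread() kernel thread. A worker_pool contains one or more workers, and the number of workers grows and shrinks dynamically with the pool's work load.

The kernel threads behind all workers can be listed with ps | grep kworker. The threads (worker_thread()) of a normal worker_pool are named as follows: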

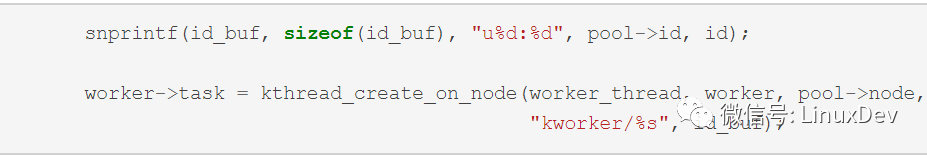

snprintf(id_buf, sizeof(id_buf), "%d:%d%s", pool->cpu, id, pool->attrs->nice < 0 ? "H" : "");

worker->task = kthread_create_on_node(worker_thread, worker, pool->node, "kworker/%s", id_buf);

So names like the following belong to normal worker_pools:

shell@PRO5:/ $ ps | grep "kworker"

root 14 2 0 0 worker_thr 0000000000 S kworker/1:0H // worker 0 of cpu1's high-priority worker_pool

root 17 2 0 0 worker_thr 0000000000 S kworker/2:0 // worker 0 of cpu2's normal-priority worker_pool

root 18 2 0 0 worker_thr 0000000000 S kworker/2:0H // worker 0 of cpu2's high-priority worker_pool

root 23699 2 0 0 worker_thr 0000000000 S kworker/0:1 // worker 1 of cpu0's normal-priority worker_pool

The corresponding topology is shown in the figure.

The following is a detailed code walkthrough of how normal worker_pools are created:

kernel/workqueue.c:

init_workqueues()->init_worker_pool()/create_worker()

static int __init init_workqueues(void)

{

int std_nice[NR_STD_WORKER_POOLS] = { 0, HIGHPRI_NICE_LEVEL };

int i, cpu;

// (1) Create the worker_pools for every cpu

/* initialize CPU pools */

for_each_possible_cpu(cpu) {

struct worker_pool *pool;

i = 0;

for_each_cpu_worker_pool(pool, cpu) {

BUG_ON(init_worker_pool(pool));

// Set the cpu

pool->cpu = cpu;

cpumask_copy(pool->attrs->cpumask, cpumask_of(cpu));

// Set the thread priority (nice)

pool->attrs->nice = std_nice[i++];

pool->node = cpu_to_node(cpu);

/* alloc pool ID */

mutex_lock(&wq_pool_mutex);

BUG_ON(worker_pool_assign_id(pool));

mutex_unlock(&wq_pool_mutex);

}

}

// (2) Create the first worker for every worker_pool

/* create the initial worker */

for_each_online_cpu(cpu) {

struct worker_pool *pool;

for_each_cpu_worker_pool(pool, cpu) {

pool->flags &= ~POOL_DISASSOCIATED;

BUG_ON(!create_worker(pool));

}

}

}

| →

static int init_worker_pool(struct worker_pool *pool)

{

spin_lock_init(&pool->lock);

pool->id = -1;

pool->cpu = -1;

pool->node = NUMA_NO_NODE;

pool->flags |= POOL_DISASSOCIATED;

// (1.1) The worker_pool's work list: workqueues hang their work on this list

// so that the pool's workers can execute it

INIT_LIST_HEAD(&pool->worklist);

// (1.2) The worker_pool's idle worker list:

// a worker with nothing to do is not destroyed right away; it first waits in the idle state

INIT_LIST_HEAD(&pool->idle_list);

// (1.3) The worker_pool's busy worker hash:

// workers that are currently executing work

hash_init(pool->busy_hash);

// (1.4) Timer that checks whether idle workers should be destroyed

init_timer_deferrable(&pool->idle_timer);

pool->idle_timer.function = idle_worker_timeout;

pool->idle_timer.data = (unsigned long)pool;

// (1.5) Timer that checks for a timeout while the worker_pool is creating a new worker

setup_timer(&pool->mayday_timer, pool_mayday_timeout,

(unsigned long)pool);

mutex_init(&pool->manager_arb);

mutex_init(&pool->attach_mutex);

INIT_LIST_HEAD(&pool->workers);

ida_init(&pool->worker_ida);

INIT_HLIST_NODE(&pool->hash_node);

pool->refcnt = 1;

/* shouldn't fail above this point */

pool->attrs = alloc_workqueue_attrs(GFP_KERNEL);

if (!pool->attrs)

return -ENOMEM;

return 0;

}

| →

static struct worker *create_worker(struct worker_pool *pool)

{

struct worker *worker = NULL;

int id = -1;

char id_buf[16];

/* ID is needed to determine kthread name */

id = ida_simple_get(&pool->worker_ida, 0, 0, GFP_KERNEL);

if (id < 0)

goto fail;

worker = alloc_worker(pool->node);

if (!worker)

goto fail;

worker->pool = pool;

worker->id = id;

if (pool->cpu >= 0)

// (2.1) Build the thread name for a worker of a normal worker_pool

snprintf(id_buf, sizeof(id_buf), "%d:%d%s", pool->cpu, id,

pool->attrs->nice < 0 ? "H" : "");

else

// (2.2) Build the thread name for a worker of an unbound worker_pool

snprintf(id_buf, sizeof(id_buf), "u%d:%d", pool->id, id);

// (2.3) Create the kernel thread for the worker

worker->task = kthread_create_on_node(worker_thread, worker, pool->node,

"kworker/%s", id_buf);

if (IS_ERR(worker->task))

goto fail;

// (2.4) Set the nice value of the kernel thread

set_user_nice(worker->task, pool->attrs->nice);

/* prevent userland from meddling with cpumask of workqueue workers */

worker->task->flags |= PF_NO_SETAFFINITY;

// (2.5) Attach the worker to the worker_pool

/* successful, attach the worker to the pool */

worker_attach_to_pool(worker, pool);

// (2.6) Put the worker into the idle state initially;

// after wake_up_process() the worker leaves the idle state by itself

/* start the newly created worker */

spin_lock_irq(&pool->lock);

worker->pool->nr_workers++;

worker_enter_idle(worker);

wake_up_process(worker->task);

spin_unlock_irq(&pool->lock);

return worker;

fail:

if (id >= 0)

ida_simple_remove(&pool->worker_ida, id);

kfree(worker);

return NULL;

}

|| →

static void worker_attach_to_pool(struct worker *worker,

struct worker_pool *pool)

{

mutex_lock(&pool->attach_mutex);

// (2.5.1) Bind the worker thread to the pool's cpus

/*

* set_cpus_allowed_ptr() will fail if the cpumask doesn't have any

* online CPUs. It'll be re-applied when any of the CPUs come up.

*/

set_cpus_allowed_ptr(worker->task, pool->attrs->cpumask);

/*

* The pool->attach_mutex ensures %POOL_DISASSOCIATED remains

* stable across this function. See the comments above the

* flag definition for details.

*/

if (pool->flags & POOL_DISASSOCIATED)

worker->flags |= WORKER_UNBOUND;

// (2.5.2) Add the worker to the worker_pool's list

list_add_tail(&worker->node, &pool->workers);

mutex_unlock(&pool->attach_mutex);

}

1.1.2 unbound worker_pool

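Most work is executed by normal worker_pools. For example, work queued on the system workqueue (system_wq) through schedule_work() or schedule_work_on() ends up being run by a worker in a normal worker_pool. These workers are bound to a specific CPU, so once a worker starts executing a work, it stays on that CPU and does not migrate.

An unbound worker_pool is the counterpart: its workers can be scheduled on multiple CPUs. They are still bound, but the binding unit is a node rather than a CPU. A node is a NUMA (Non Uniform Memory Access Architecture) concept: a NUMA system may have several nodes, and each node contains one or more CPUs.

The kernel threads (worker_thread()) of an unbound worker_pool are named with the "u%d:%d" format (pool->id, then worker id) used in create_worker() above.

So names like the following belong to unbound worker_pools: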

shell@PRO5:/ $ ps | grep "kworker"

root 23906 2 0 0 worker_thr 0000000000 S kworker/u20:2 // worker 2 of unbound pool 20

root 24564 2 0 0 worker_thr 0000000000 S kworker/u20:0 // worker 0 of unbound pool 20

root 24622 2 0 0 worker_thr 0000000000 S kworker/u21:1 // worker 1 of unbound pool 21
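Both naming rules can be reproduced in user space. The sketch below assumes only the two format strings shown in create_worker(); `kworker_name()` and `kworker_is_highpri()` are hypothetical helpers written for this article, not kernel functions.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>
#include <string.h>

/*
 * User-space model of the kworker naming rule (not kernel code).
 *   cpu >= 0: per-CPU (normal) pool -> "kworker/<cpu>:<id>[H]"
 *   cpu <  0: unbound pool          -> "kworker/u<pool_id>:<id>"
 * Returns the value of snprintf().
 */
static int kworker_name(char *buf, size_t len, int cpu, int pool_id,
			int worker_id, int nice)
{
	assert(buf != NULL && len > 0);

	if (cpu >= 0)
		return snprintf(buf, len, "kworker/%d:%d%s", cpu, worker_id,
				nice < 0 ? "H" : "");
	return snprintf(buf, len, "kworker/u%d:%d", pool_id, worker_id);
}

/* True if @name carries the trailing 'H' of a high-priority per-CPU worker. */
static int kworker_is_highpri(const char *name)
{
	size_t n;

	assert(name != NULL);
	n = strlen(name);
	return n > 0 && name[n - 1] == 'H';
}
```

Reading a name from ps output then works in reverse: a leading `u` after the slash means an unbound pool, and a trailing `H` means the high-priority per-CPU pool.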

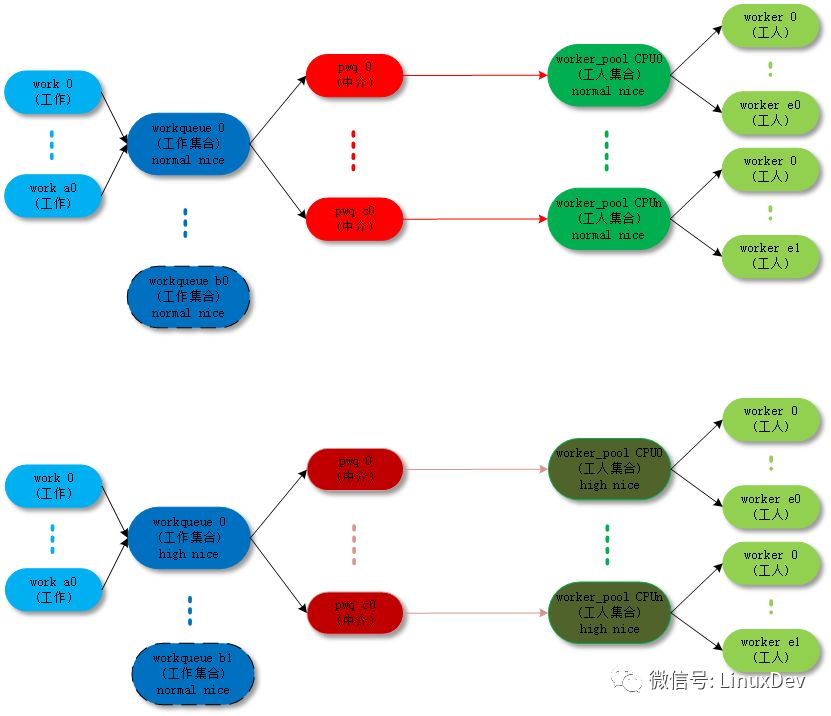

Unbound worker_pools also come in two classes:

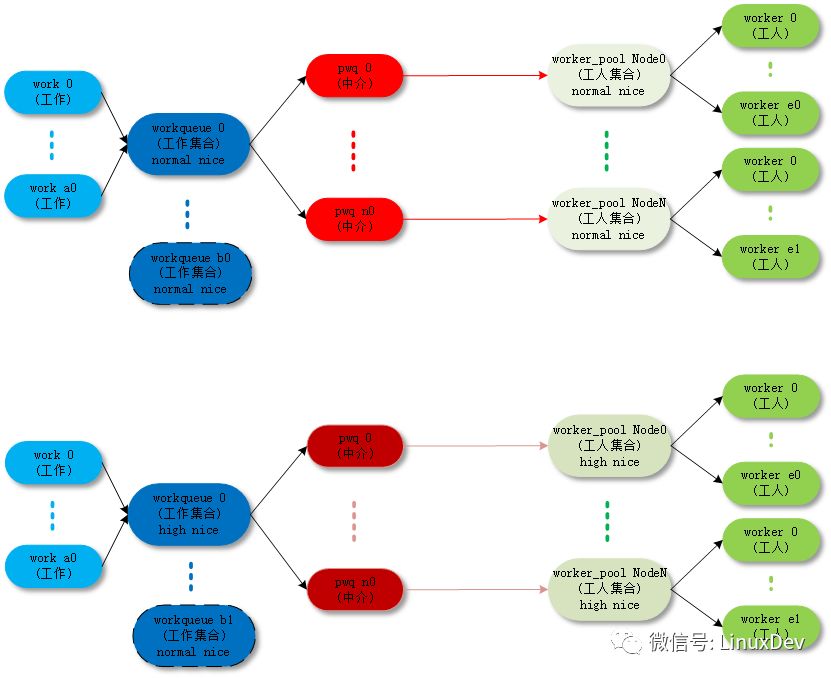

unbound_std_wq: each node has its own worker_pool, so several nodes mean several worker_pools.

The corresponding topology is shown below:

unbound_std_wq topology

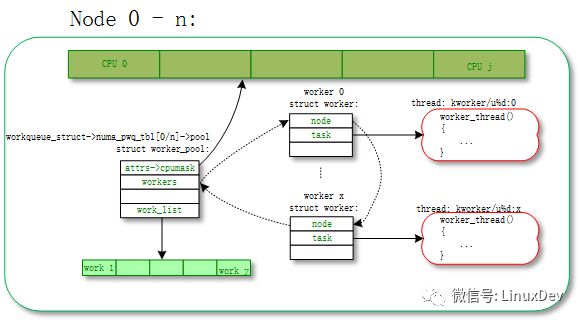

ordered_wq: all nodes share one default worker_pool.

The corresponding topology is shown below:

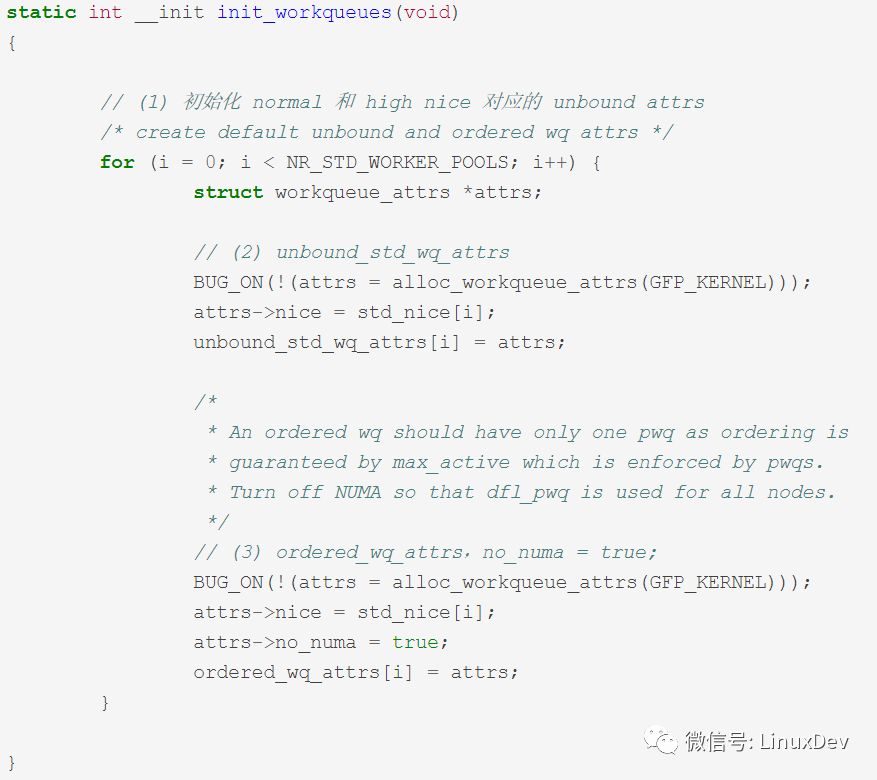

The following is a detailed code walkthrough of how unbound worker_pools are created:

kernel/workqueue.c:

init_workqueues()-> unbound_std_wq_attrs/ordered_wq_attrs

kernel/workqueue.c:

__alloc_workqueue_key()->alloc_and_link_pwqs()->apply_workqueue_attrs()->alloc_unbound_pwq()/numa_pwq_tbl_install()

struct workqueue_struct *__alloc_workqueue_key(const char *fmt,

unsigned int flags,

int max_active,

struct lock_class_key *key,

const char *lock_name, ...)

{

size_t tbl_size = 0;

va_list args;

struct workqueue_struct *wq;

struct pool_workqueue *pwq;

/* see the comment above the definition of WQ_POWER_EFFICIENT */

if ((flags & WQ_POWER_EFFICIENT) && wq_power_efficient)

flags |= WQ_UNBOUND;

/* allocate wq and format name */

if (flags & WQ_UNBOUND)

tbl_size = nr_node_ids * sizeof(wq->numa_pwq_tbl[0]);

// (1) Allocate the workqueue_struct

wq = kzalloc(sizeof(*wq) + tbl_size, GFP_KERNEL);

if (!wq)

return NULL;

if (flags & WQ_UNBOUND) {

wq->unbound_attrs = alloc_workqueue_attrs(GFP_KERNEL);

if (!wq->unbound_attrs)

goto err_free_wq;

}

va_start(args, lock_name);

vsnprintf(wq->name, sizeof(wq->name), fmt, args);

va_end(args);

// (2) max_active: the maximum number of works a pwq may place on its worker_pool at once

max_active = max_active ?: WQ_DFL_ACTIVE;

max_active = wq_clamp_max_active(max_active, flags, wq->name);

/* init wq */

wq->flags = flags;

wq->saved_max_active = max_active;

mutex_init(&wq->mutex);

atomic_set(&wq->nr_pwqs_to_flush, 0);

INIT_LIST_HEAD(&wq->pwqs);

INIT_LIST_HEAD(&wq->flusher_queue);

INIT_LIST_HEAD(&wq->flusher_overflow);

INIT_LIST_HEAD(&wq->maydays);

lockdep_init_map(&wq->lockdep_map, lock_name, key, 0);

INIT_LIST_HEAD(&wq->list);

// (3) Allocate the pool_workqueues for the workqueue;

// a pool_workqueue links the workqueue to a worker_pool

if (alloc_and_link_pwqs(wq) < 0)

goto err_free_wq;

// (4) For a WQ_MEM_RECLAIM workqueue,

// create the matching rescuer_thread() kernel thread

/*

* Workqueues which may be used during memory reclaim should

* have a rescuer to guarantee forward progress.

*/

if (flags & WQ_MEM_RECLAIM) {

struct worker *rescuer;

rescuer = alloc_worker(NUMA_NO_NODE);

if (!rescuer)

goto err_destroy;

rescuer->rescue_wq = wq;

rescuer->task = kthread_create(rescuer_thread, rescuer, "%s",

wq->name);

if (IS_ERR(rescuer->task)) {

kfree(rescuer);

goto err_destroy;

}

wq->rescuer = rescuer;

rescuer->task->flags |= PF_NO_SETAFFINITY;

wake_up_process(rescuer->task);

}

// (5) If requested, create the sysfs files for the workqueue

if ((wq->flags & WQ_SYSFS) && workqueue_sysfs_register(wq))

goto err_destroy;

/*

* wq_pool_mutex protects global freeze state and workqueues list.

* Grab it, adjust max_active and add the new @wq to workqueues

* list.

*/

mutex_lock(&wq_pool_mutex);

mutex_lock(&wq->mutex);

for_each_pwq(pwq, wq)

pwq_adjust_max_active(pwq);

mutex_unlock(&wq->mutex);

// (6) Add the new workqueue to the global list workqueues

list_add(&wq->list, &workqueues);

mutex_unlock(&wq_pool_mutex);

return wq;

err_free_wq:

free_workqueue_attrs(wq->unbound_attrs);

kfree(wq);

return NULL;

err_destroy:

destroy_workqueue(wq);

return NULL;

}

| →

static int alloc_and_link_pwqs(struct workqueue_struct *wq)

{

bool highpri = wq->flags & WQ_HIGHPRI;

int cpu, ret;

// (3.1) normal workqueue:

// how pool_workqueue links the workqueue to a worker_pool

if (!(wq->flags & WQ_UNBOUND)) {

// Allocate one pool_workqueue per cpu for the workqueue and store it in wq->cpu_pwqs

wq->cpu_pwqs = alloc_percpu(struct pool_workqueue);

if (!wq->cpu_pwqs)

return -ENOMEM;

for_each_possible_cpu(cpu) {

struct pool_workqueue *pwq =

per_cpu_ptr(wq->cpu_pwqs, cpu);

struct worker_pool *cpu_pools =

per_cpu(cpu_worker_pools, cpu);

// Assign the normal worker_pool created at init time to the pool_workqueue

init_pwq(pwq, wq, &cpu_pools[highpri]);

mutex_lock(&wq->mutex);

// Link the pool_workqueue to the workqueue

link_pwq(pwq);

mutex_unlock(&wq->mutex);

}

return 0;

} else if (wq->flags & __WQ_ORDERED) {

// (3.2) unbound ordered_wq workqueue:

// how pool_workqueue links the workqueue to a worker_pool

ret = apply_workqueue_attrs(wq, ordered_wq_attrs[highpri]);

/* there should only be single pwq for ordering guarantee */

WARN(!ret && (wq->pwqs.next != &wq->dfl_pwq->pwqs_node ||

wq->pwqs.prev != &wq->dfl_pwq->pwqs_node),

"ordering guarantee broken for workqueue %s\n", wq->name);

return ret;

} else {

// (3.3) unbound unbound_std_wq workqueue:

// how pool_workqueue links the workqueue to a worker_pool

return apply_workqueue_attrs(wq, unbound_std_wq_attrs[highpri]);

}

}

|| →

int apply_workqueue_attrs(struct workqueue_struct *wq,

const struct workqueue_attrs *attrs)

{

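// (3.2.1) Based on the unbound ordered_wq_attrs/unbound_std_wq_attrs,

// create the matching pool_workqueue and worker_pool.

// These worker_pools are not created in advance; they are created on demand, together with new worker kernel threads.

// Each new pool_workqueue is stored in pwq_tbl[node]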

/*

* If something goes wrong during CPU up/down, we'll fall back to

* the default pwq covering whole @attrs->cpumask. Always create

* it even if we don't use it immediately.

*/

dfl_pwq = alloc_unbound_pwq(wq, new_attrs);

if (!dfl_pwq)

goto enomem_pwq;

for_each_node(node) {

if (wq_calc_node_cpumask(attrs, node, -1, tmp_attrs->cpumask)) {

pwq_tbl[node] = alloc_unbound_pwq(wq, tmp_attrs);

if (!pwq_tbl[node])

goto enomem_pwq;

} else {

dfl_pwq->refcnt++;

pwq_tbl[node] = dfl_pwq;

}

}

/* save the previous pwq and install the new one */

// (3.2.2) Install the temporary pwq_tbl[node] into wq->numa_pwq_tbl[node]

for_each_node(node)

pwq_tbl[node] = numa_pwq_tbl_install(wq, node, pwq_tbl[node]);

}

||| →

static struct pool_workqueue *alloc_unbound_pwq(struct workqueue_struct *wq,

const struct workqueue_attrs *attrs)

{

struct worker_pool *pool;

struct pool_workqueue *pwq;

lockdep_assert_held(&wq_pool_mutex);

// (3.2.1.1) If an unbound_pool already exists for these attrs, reuse it;

// otherwise create a new unbound_pool from the attrs

pool = get_unbound_pool(attrs);

if (!pool)

return NULL;

pwq = kmem_cache_alloc_node(pwq_cache, GFP_KERNEL, pool->node);

if (!pwq) {

put_unbound_pool(pool);

return NULL;

}

init_pwq(pwq, wq, pool);

return pwq;

}

1.2 worker

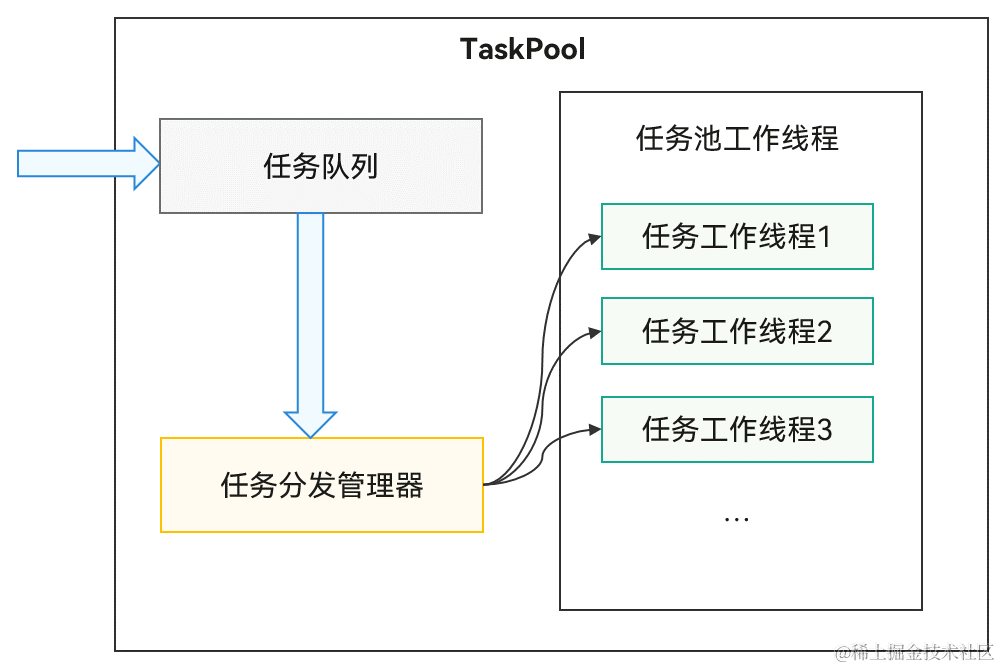

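Each worker corresponds to one worker_thread() kernel thread, and a worker_pool has one or more workers. All workers of a pool take work from the same list, worker_pool->worklist.

Two questions matter here:

how a worker processes work;

how a worker_pool dynamically manages the number of workers;

1.2.1 How a worker processes work

Work is processed mainly in worker_thread()->process_one_work(). Let's look at the implementation.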

kernel/workqueue.c:

worker_thread()->process_one_work()

static int worker_thread(void *__worker)
{
	struct worker *worker = __worker;
	struct worker_pool *pool = worker->pool;

	/* tell the scheduler that this is a workqueue worker */
	worker->task->flags |= PF_WQ_WORKER;
woke_up:
	spin_lock_irq(&pool->lock);

	// (1) Check whether this worker should die
	/* am I supposed to die? */
	if (unlikely(worker->flags & WORKER_DIE)) {
		spin_unlock_irq(&pool->lock);
		WARN_ON_ONCE(!list_empty(&worker->entry));
		worker->task->flags &= ~PF_WQ_WORKER;

		set_task_comm(worker->task, "kworker/dying");
		ida_simple_remove(&pool->worker_ida, worker->id);
		worker_detach_from_pool(worker, pool);
		kfree(worker);
		return 0;
	}

	// (2) Leave the idle state
	// A worker is always idle before it is woken up
	worker_leave_idle(worker);
recheck:
	// (3) Continue only if this worker is needed; otherwise go back to idle
	// need_more_worker() condition: (pool->worklist is not empty) && (pool->nr_running == 0),
	// i.e. the worklist has pending work and no worker is currently running
	/* no more worker necessary? */
	if (!need_more_worker(pool))
		goto sleep;

	// (4) If pool->nr_idle == 0, start creating more workers:
	// the idle list has no spare worker left, so create some in advance
	/* do we need to manage? */
	if (unlikely(!may_start_working(pool)) && manage_workers(worker))
		goto recheck;

	/*
	 * ->scheduled list can only be filled while a worker is
	 * preparing to process a work or actually processing it.
	 * Make sure nobody diddled with it while I was sleeping.
	 */
	WARN_ON_ONCE(!list_empty(&worker->scheduled));

	/*
	 * Finish PREP stage. We're guaranteed to have at least one idle
	 * worker or that someone else has already assumed the manager
	 * role. This is where @worker starts participating in concurrency
	 * management if applicable and concurrency management is restored
	 * after being rebound. See rebind_workers() for details.
	 */
	worker_clr_flags(worker, WORKER_PREP | WORKER_REBOUND);

	do {
		// (5) pool->worklist is not empty: take the first work from it
		struct work_struct *work =
			list_first_entry(&pool->worklist,
					 struct work_struct, entry);

		if (likely(!(*work_data_bits(work) & WORK_STRUCT_LINKED))) {
			/* optimization path, not strictly necessary */
			// (6) Execute an ordinary work
			process_one_work(worker, work);
			if (unlikely(!list_empty(&worker->scheduled)))
				process_scheduled_works(worker);
		} else {
			// (7) Execute work that was deliberately scheduled to one specific worker.
			// Ordinary work sits on the pool's shared list, pool->worklist.
			// Only special work is dispatched to a worker's own worker->scheduled list:
			// 1. the barrier work inserted by flush_work();
			// 2. work pushed to this worker from another worker on a collision
			move_linked_works(work, &worker->scheduled, NULL);
			process_scheduled_works(worker);
		}
		// (8) keep_working() condition:
		// pool->worklist is not empty && (pool->nr_running <= 1)
	} while (keep_working(pool));

	worker_set_flags(worker, WORKER_PREP);
sleep:
	// (9) The worker enters the idle state
	/*
	 * pool->lock is held and there's no work to process and no need to
	 * manage, sleep. Workers are woken up only while holding
	 * pool->lock or from local cpu, so setting the current state
	 * before releasing pool->lock is enough to prevent losing any
	 * event.
	 */
	worker_enter_idle(worker);
	__set_current_state(TASK_INTERRUPTIBLE);
	spin_unlock_irq(&pool->lock);
	schedule();
	goto woke_up;
}

| →

static void process_one_work(struct worker *worker, struct work_struct *work)
__releases(&pool->lock)
__acquires(&pool->lock)
{
	struct pool_workqueue *pwq = get_work_pwq(work);
	struct worker_pool *pool = worker->pool;
	bool cpu_intensive = pwq->wq->flags & WQ_CPU_INTENSIVE;
	int work_color;
	struct worker *collision;
#ifdef CONFIG_LOCKDEP
	/*
	 * It is permissible to free the struct work_struct from
	 * inside the function that is called from it, this we need to
	 * take into account for lockdep too. To avoid bogus "held
	 * lock freed" warnings as well as problems when looking into
	 * work->lockdep_map, make a copy and use that here.
	 */
	struct lockdep_map lockdep_map;

	lockdep_copy_map(&lockdep_map, &work->lockdep_map);
#endif
	/* ensure we're on the correct CPU */
	WARN_ON_ONCE(!(pool->flags & POOL_DISASSOCIATED) &&
		     raw_smp_processor_id() != pool->cpu);

	// (8.1) If the work is already running on another worker of this worker_pool,
	// move it to that worker's scheduled list so it runs later
	/*
	 * A single work shouldn't be executed concurrently by
	 * multiple workers on a single cpu. Check whether anyone is
	 * already processing the work. If so, defer the work to the
	 * currently executing one.
	 */
	collision = find_worker_executing_work(pool, work);
	if (unlikely(collision)) {
		move_linked_works(work, &collision->scheduled, NULL);
		return;
	}

	// (8.2) Add the worker to the busy hash pool->busy_hash
	/* claim and dequeue */
	debug_work_deactivate(work);
	hash_add(pool->busy_hash, &worker->hentry, (unsigned long)work);
	worker->current_work = work;
	worker->current_func = work->func;
	worker->current_pwq = pwq;
	work_color = get_work_color(work);

	list_del_init(&work->entry);

	// (8.3) If the work's wq is CPU intensive (WQ_CPU_INTENSIVE),
	// executing this work is taken out of the worker_pool's concurrency
	// management and the worker behaves like an independent thread
	/*
	 * CPU intensive works don't participate in concurrency management.
	 * They're the scheduler's responsibility. This takes @worker out
	 * of concurrency management and the next code block will chain
	 * execution of the pending work items.
	 */
	if (unlikely(cpu_intensive))
		worker_set_flags(worker, WORKER_CPU_INTENSIVE);

	// (8.4) For UNBOUND or CPU_INTENSIVE work, check whether an idle worker must be woken;
	// ordinary work never takes this path
	/*
	 * Wake up another worker if necessary. The condition is always
	 * false for normal per-cpu workers since nr_running would always
	 * be >= 1 at this point. This is used to chain execution of the
	 * pending work items for WORKER_NOT_RUNNING workers such as the
	 * UNBOUND and CPU_INTENSIVE ones.
	 */
	if (need_more_worker(pool))
		wake_up_worker(pool);

	/*
	 * Record the last pool and clear PENDING which should be the last
	 * update to @work. Also, do this inside @pool->lock so that
	 * PENDING and queued state changes happen together while IRQ is
	 * disabled.
	 */
	set_work_pool_and_clear_pending(work, pool->id);

	spin_unlock_irq(&pool->lock);

	lock_map_acquire_read(&pwq->wq->lockdep_map);
	lock_map_acquire(&lockdep_map);
	trace_workqueue_execute_start(work);
	// (8.5) Run the work function
	worker->current_func(work);
	/*
	 * While we must be careful to not use "work" after this, the trace
	 * point will only record its address.
	 */
	trace_workqueue_execute_end(work);
	lock_map_release(&lockdep_map);
	lock_map_release(&pwq->wq->lockdep_map);

	if (unlikely(in_atomic() || lockdep_depth(current) > 0)) {
		pr_err("BUG: workqueue leaked lock or atomic: %s/0x%08x/%d\n"
		       " last function: %pf\n",
		       current->comm, preempt_count(), task_pid_nr(current),
		       worker->current_func);
		debug_show_held_locks(current);
		dump_stack();
	}

	/*
	 * The following prevents a kworker from hogging CPU on !PREEMPT
	 * kernels, where a requeueing work item waiting for something to
	 * happen could deadlock with stop_machine as such work item could
	 * indefinitely requeue itself while all other CPUs are trapped in
	 * stop_machine. At the same time, report a quiescent RCU state so
	 * the same condition doesn't freeze RCU.
	 */
	cond_resched_rcu_qs();

	spin_lock_irq(&pool->lock);

	/* clear cpu intensive status */
	if (unlikely(cpu_intensive))
		worker_clr_flags(worker, WORKER_CPU_INTENSIVE);

	/* we're done with it, release */
	hash_del(&worker->hentry);
	worker->current_work = NULL;
	worker->current_func = NULL;
	worker->current_pwq = NULL;
	worker->desc_valid = false;
	pwq_dec_nr_in_flight(pwq, work_color);

}

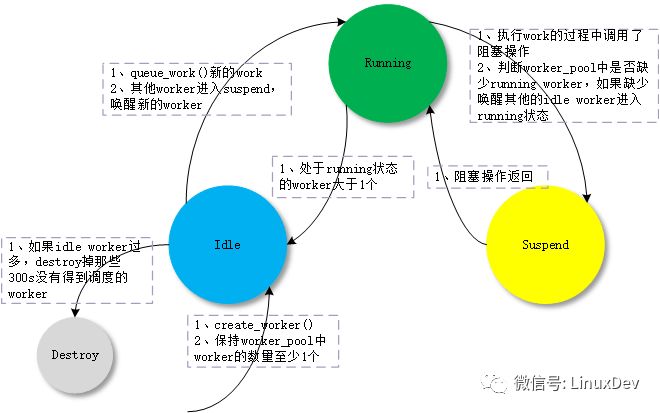

1.2.2 Dynamic worker management in worker_pool

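How a worker_pool adds and removes workers dynamically is the core algorithm of CMWQ. The idea is as follows:

A worker in a worker_pool is in one of 3 states: idle, running, or suspend;

If the worker_pool has work to process, keep at least one running worker to process it;

If a running worker blocks (enters the suspend state) while processing work, wake an idle worker so that the remaining work keeps being processed;

If there is work to execute and more than one worker is running, the surplus running workers go idle;

If there is no work to execute, all workers go idle;

If too many workers have been created, destroy_worker() destroys the idle workers that have not run again within 300 s (IDLE_WORKER_TIMEOUT).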
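The first rules in this list map onto three small predicates that worker_thread() calls. Below is a simplified user-space model of them, written from the conditions quoted in the worker_thread() annotations. `struct pool_state` and its integer counters are assumptions that stand in for pool->worklist, pool->nr_running and pool->nr_idle; the kernel versions operate on lists and atomics.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Simplified snapshot of the pool state the checks look at. */
struct pool_state {
	int nr_pending;	/* work items waiting on the worklist */
	int nr_running;	/* workers that are running, i.e. not blocked */
	int nr_idle;	/* workers parked on the idle list */
};

/* Pending work but nobody running: another worker has to be woken. */
static bool need_more_worker(const struct pool_state *p)
{
	assert(p != NULL);
	return p->nr_pending > 0 && p->nr_running == 0;
}

/* A worker may start processing only if an idle spare stays behind. */
static bool may_start_working(const struct pool_state *p)
{
	assert(p != NULL);
	return p->nr_idle > 0;
}

/* Keep looping while work is pending and at most one worker is running. */
static bool keep_working(const struct pool_state *p)
{
	assert(p != NULL);
	return p->nr_pending > 0 && p->nr_running <= 1;
}
```

With pending work and `nr_running == 2`, `keep_working()` is false, so one of the two workers drops back to idle; with `nr_running == 0`, `need_more_worker()` is true and an idle worker is woken.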

For the detailed code, see the analysis of worker_thread()->process_one_work() in the previous section.

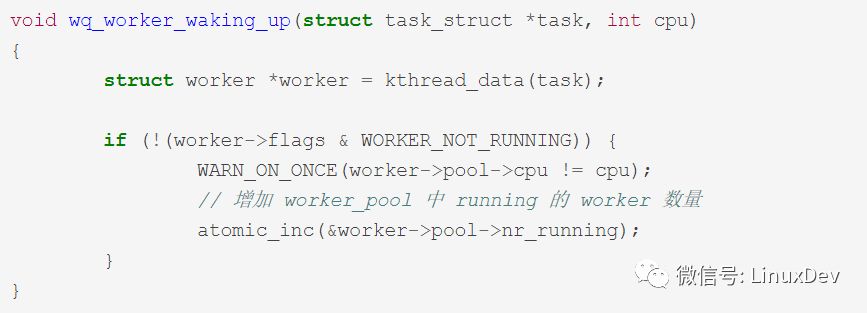

To track when a worker is running or suspended, and to adjust the number of workers accordingly, wq hooks into the process scheduler:

Tracking a worker going from suspend to running: ttwu_activate()->wq_worker_waking_up()

Tracking a worker going from running to suspend: __schedule()->wq_worker_sleeping()

struct task_struct *wq_worker_sleeping(struct task_struct *task, int cpu)
{
	struct worker *worker = kthread_data(task), *to_wakeup = NULL;
	struct worker_pool *pool;

	/*
	 * Rescuers, which may not have all the fields set up like normal
	 * workers, also reach here, let's not access anything before
	 * checking NOT_RUNNING.
	 */
	if (worker->flags & WORKER_NOT_RUNNING)
		return NULL;

	pool = worker->pool;

	/* this can only happen on the local cpu */
	if (WARN_ON_ONCE(cpu != raw_smp_processor_id() || pool->cpu != cpu))
		return NULL;

	/*
	 * The counterpart of the following dec_and_test, implied mb,
	 * worklist not empty test sequence is in insert_work().
	 * Please read comment there.
	 *
	 * NOT_RUNNING is clear. This means that we're bound to and
	 * running on the local cpu w/ rq lock held and preemption
	 * disabled, which in turn means that none else could be
	 * manipulating idle_list, so dereferencing idle_list without pool
	 * lock is safe.
	 */
	// Decrement the number of running workers in the worker_pool.
	// If the worklist still has work to process, wake the first idle worker to handle it
	if (atomic_dec_and_test(&pool->nr_running) &&
	    !list_empty(&pool->worklist))
		to_wakeup = first_idle_worker(pool);
	return to_wakeup ? to_wakeup->task : NULL;

}

The scheduling idea of the worker_pool here is: while there is work to process, keep exactly one worker in the running state, no more and no fewer.
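The bookkeeping behind that rule can be modelled in a few lines of user-space C. This is a sketch under simplifying assumptions: `struct pool_model`, `worker_sleeping()` and `worker_waking_up()` are hypothetical names, plain integers replace the kernel's atomic counter and lists, and there is no locking.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

struct pool_model {
	int nr_running;	/* workers in the running state */
	int nr_pending;	/* work items still on the worklist */
	int nr_idle;	/* idle workers that could be woken */
};

/*
 * Model of the "worker is about to block" hook: drop nr_running and
 * report whether an idle worker has to take over. The wakeup itself
 * is only signalled through the return value.
 */
static bool worker_sleeping(struct pool_model *p)
{
	assert(p != NULL && p->nr_running > 0);

	p->nr_running--;
	/* last running worker gone, work left, and a spare is available */
	return p->nr_running == 0 && p->nr_pending > 0 && p->nr_idle > 0;
}

/* Model of the "blocked worker became runnable again" hook. */
static void worker_waking_up(struct pool_model *p)
{
	assert(p != NULL);
	p->nr_running++;
}
```

Only the transition of `nr_running` to zero triggers a wakeup, which is why a pool with two running workers does not wake a third when one of them blocks.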

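There is one problem, though. A CPU-intensive work never enters the suspend state, yet it occupies the CPU for a long time and makes the work queued behind it wait too long.

To solve this, CMWQ provides WQ_CPU_INTENSIVE. If a wq declares itself CPU_INTENSIVE, the current worker is taken out of concurrency management as if it had entered the suspend state, so CMWQ wakes or creates another worker and the following work gets executed.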

kernel/workqueue.c:

worker_thread()->process_one_work()

static void process_one_work(struct worker *worker, struct work_struct *work)
__releases(&pool->lock)
__acquires(&pool->lock)
{
	bool cpu_intensive = pwq->wq->flags & WQ_CPU_INTENSIVE;

	// (1) Set WORKER_CPU_INTENSIVE on the current worker:
	// nr_running is decremented by 1, so from the worker_pool's
	// point of view the worker has entered the suspend state
	/*
	 * CPU intensive works don't participate in concurrency management.
	 * They're the scheduler's responsibility. This takes @worker out
	 * of concurrency management and the next code block will chain
	 * execution of the pending work items.
	 */
	if (unlikely(cpu_intensive))
		worker_set_flags(worker, WORKER_CPU_INTENSIVE);

	// (2) Following step (1), check whether a new worker must be woken to process work
	/*
	 * Wake up another worker if necessary. The condition is always
	 * false for normal per-cpu workers since nr_running would always
	 * be >= 1 at this point. This is used to chain execution of the
	 * pending work items for WORKER_NOT_RUNNING workers such as the
	 * UNBOUND and CPU_INTENSIVE ones.
	 */
	if (need_more_worker(pool))
		wake_up_worker(pool);

	// (3) Run the work
	worker->current_func(work);

	// (4) When done, clear WORKER_CPU_INTENSIVE on the current worker:
	// the worker is back in the running state
	/* clear cpu intensive status */
	if (unlikely(cpu_intensive))
		worker_clr_flags(worker, WORKER_CPU_INTENSIVE);
}

WORKER_NOT_RUNNING = WORKER_PREP | WORKER_CPU_INTENSIVE | WORKER_UNBOUND | WORKER_REBOUND,

static inline void worker_set_flags(struct worker *worker, unsigned int flags)
{
	struct worker_pool *pool = worker->pool;

	WARN_ON_ONCE(worker->task != current);

	/* If transitioning into NOT_RUNNING, adjust nr_running. */
	if ((flags & WORKER_NOT_RUNNING) &&
	    !(worker->flags & WORKER_NOT_RUNNING)) {
		atomic_dec(&pool->nr_running);
	}

	worker->flags |= flags;
}

static inline void worker_clr_flags(struct worker *worker, unsigned int flags)
{
	struct worker_pool *pool = worker->pool;
	unsigned int oflags = worker->flags;

	WARN_ON_ONCE(worker->task != current);

	worker->flags &= ~flags;

	/*
	 * If transitioning out of NOT_RUNNING, increment nr_running. Note
	 * that the nested NOT_RUNNING is not a noop. NOT_RUNNING is mask
	 * of multiple flags, not a single flag.
	 */
	if ((flags & WORKER_NOT_RUNNING) && (oflags & WORKER_NOT_RUNNING))
		if (!(worker->flags & WORKER_NOT_RUNNING))
			atomic_inc(&pool->nr_running);

}
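Because WORKER_NOT_RUNNING is a mask of several flags, nr_running changes only when the first of those bits is set and when the last one is cleared. The user-space model below mirrors that logic. The `M_*` constants, `struct worker_model` and the `model_*` functions are hypothetical stand-ins, and a plain int replaces the atomic counter.

```c
#include <assert.h>
#include <stddef.h>

enum {
	M_PREP		= 1 << 0,
	M_CPU_INTENSIVE	= 1 << 1,
	M_UNBOUND	= 1 << 2,
	M_REBOUND	= 1 << 3,
	M_NOT_RUNNING	= M_PREP | M_CPU_INTENSIVE | M_UNBOUND | M_REBOUND,
};

struct worker_model {
	unsigned int flags;
	int *nr_running;	/* counter shared with the pool */
};

static void model_set_flags(struct worker_model *w, unsigned int flags)
{
	assert(w != NULL && w->nr_running != NULL);

	/* first NOT_RUNNING bit being set: stop counting this worker */
	if ((flags & M_NOT_RUNNING) && !(w->flags & M_NOT_RUNNING))
		(*w->nr_running)--;
	w->flags |= flags;
}

static void model_clr_flags(struct worker_model *w, unsigned int flags)
{
	unsigned int oflags;

	assert(w != NULL && w->nr_running != NULL);

	oflags = w->flags;
	w->flags &= ~flags;
	/* count the worker again only once the last NOT_RUNNING bit is gone */
	if ((flags & M_NOT_RUNNING) && (oflags & M_NOT_RUNNING) &&
	    !(w->flags & M_NOT_RUNNING))
		(*w->nr_running)++;
}
```

Setting `M_CPU_INTENSIVE` and then `M_UNBOUND` decrements the counter once; clearing `M_CPU_INTENSIVE` alone leaves it unchanged, and it is restored only after `M_UNBOUND` is cleared as well.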

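1.2.3 CPU hotplug handling

As the previous sections show, the system creates normal worker_pools that are bound to a CPU and unbound worker_pools that are not, and each worker_pool creates workers dynamically.

So how are worker_pools and workers handled when a CPU is hotplugged? Let's look at the code: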

kernel/workqueue.c:

workqueue_cpu_up_callback()/workqueue_cpu_down_callback()

static int __init init_workqueues(void)

{
	cpu_notifier(workqueue_cpu_up_callback, CPU_PRI_WORKQUEUE_UP);
	hotcpu_notifier(workqueue_cpu_down_callback, CPU_PRI_WORKQUEUE_DOWN);
}

| →

static int workqueue_cpu_down_callback(struct notifier_block *nfb,
				       unsigned long action,
				       void *hcpu)
{
	int cpu = (unsigned long)hcpu;
	struct work_struct unbind_work;
	struct workqueue_struct *wq;

	switch (action & ~CPU_TASKS_FROZEN) {
	case CPU_DOWN_PREPARE:
		/* unbinding per-cpu workers should happen on the local CPU */
		INIT_WORK_ONSTACK(&unbind_work, wq_unbind_fn);
		// (1) cpu down_prepare:
		// unbind the workers of the normal worker_pools bound to this cpu.
		// As the cpu goes down, these workers migrate to other cpus
		queue_work_on(cpu, system_highpri_wq, &unbind_work);

		// (2) Update unbound wqs for the cpu change
		/* update NUMA affinity of unbound workqueues */
		mutex_lock(&wq_pool_mutex);
		list_for_each_entry(wq, &workqueues, list)
			wq_update_unbound_numa(wq, cpu, false);
		mutex_unlock(&wq_pool_mutex);

		/* wait for per-cpu unbinding to finish */
		flush_work(&unbind_work);
		destroy_work_on_stack(&unbind_work);
		break;
	}
	return NOTIFY_OK;
}

| →

static int workqueue_cpu_up_callback(struct notifier_block *nfb,
				     unsigned long action,
				     void *hcpu)
{
	int cpu = (unsigned long)hcpu;
	struct worker_pool *pool;
	struct workqueue_struct *wq;
	int pi;

	switch (action & ~CPU_TASKS_FROZEN) {
	case CPU_UP_PREPARE:
		for_each_cpu_worker_pool(pool, cpu) {
			if (pool->nr_workers)
				continue;
			if (!create_worker(pool))
				return NOTIFY_BAD;
		}
		break;

	case CPU_DOWN_FAILED:
	case CPU_ONLINE:
		mutex_lock(&wq_pool_mutex);
		// (3) cpu up
		for_each_pool(pool, pi) {
			mutex_lock(&pool->attach_mutex);
			// If a normal worker_pool bound to this cpu has workers
			// that were unbound (WORKER_UNBOUND), rebind them to the
			// worker_pool so they resume work bound to this cpu
			if (pool->cpu == cpu)
				rebind_workers(pool);
			else if (pool->cpu < 0)
				restore_unbound_workers_cpumask(pool, cpu);
			mutex_unlock(&pool->attach_mutex);
		}

		/* update NUMA affinity of unbound workqueues */
		list_for_each_entry(wq, &workqueues, list)
			wq_update_unbound_numa(wq, cpu, true);
		mutex_unlock(&wq_pool_mutex);
		break;
	}
	return NOTIFY_OK;

}

1.3 workqueue

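A workqueue is a collection that holds a group of work. Workqueues fall into two classes: those created by the system and those created by users.

Whether it is a system or a user workqueue, if WQ_UNBOUND is not specified it is bound to the normal worker_pools by default.

1.3.1 System workqueues

At initialization the system creates a set of default workqueues: system_wq, system_highpri_wq, system_long_wq, system_unbound_wq, system_freezable_wq, system_power_efficient_wq, and system_freezable_power_efficient_wq.

system_wq, for example, is the one schedule_work() uses by default.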

kernel/workqueue.c:

init_workqueues()

static int __init init_workqueues(void)
{
	system_wq = alloc_workqueue("events", 0, 0);
	system_highpri_wq = alloc_workqueue("events_highpri", WQ_HIGHPRI, 0);
	system_long_wq = alloc_workqueue("events_long", 0, 0);
	system_unbound_wq = alloc_workqueue("events_unbound", WQ_UNBOUND,
					    WQ_UNBOUND_MAX_ACTIVE);
	system_freezable_wq = alloc_workqueue("events_freezable",
					      WQ_FREEZABLE, 0);
	system_power_efficient_wq = alloc_workqueue("events_power_efficient",
						    WQ_POWER_EFFICIENT, 0);
	system_freezable_power_efficient_wq = alloc_workqueue("events_freezable_power_efficient",
							      WQ_FREEZABLE | WQ_POWER_EFFICIENT,
							      0);
}

1.3.2 Creating a workqueue

For the details, see the code analysis in the previous sections: alloc_workqueue() -> __alloc_workqueue_key() -> alloc_and_link_pwqs().

1.3.3 flush_workqueue()

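The logic in this part, with wq->work_color and wq->flush_color being swapped back and forth, makes my head spin. I don't understand it yet and will leave it for later; if anyone has figured it out, please teach me. :)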

1.4 pool_workqueue

pool_workqueue is only a go-between.

For the details, see the code analysis in the previous sections: alloc_workqueue() -> __alloc_workqueue_key() -> alloc_and_link_pwqs().

1.5 work

A work describes one piece of work waiting to be executed.

1.5.1 queue_work()

queue_work() pushes a work onto a workqueue.

kernel/workqueue.c:

queue_work() -> queue_work_on() -> __queue_work()

static void __queue_work(int cpu, struct workqueue_struct *wq,
			 struct work_struct *work)
{
	struct pool_workqueue *pwq;
	struct worker_pool *last_pool;
	struct list_head *worklist;
	unsigned int work_flags;
	unsigned int req_cpu = cpu;

	/*
	 * While a work item is PENDING && off queue, a task trying to
	 * steal the PENDING will busy-loop waiting for it to either get
	 * queued or lose PENDING. Grabbing PENDING and queueing should
	 * happen with IRQ disabled.
	 */
	WARN_ON_ONCE(!irqs_disabled());

	debug_work_activate(work);

	/* if draining, only works from the same workqueue are allowed */
	if (unlikely(wq->flags & __WQ_DRAINING) &&
	    WARN_ON_ONCE(!is_chained_work(wq)))
		return;
retry:
	// (1) If no cpu was specified, use the current cpu
	if (req_cpu == WORK_CPU_UNBOUND)
		cpu = raw_smp_processor_id();

	/* pwq which will be used unless @work is executing elsewhere */
	if (!(wq->flags & WQ_UNBOUND))
		// (2) For a normal wq, use the normal worker_pool of this cpu
		pwq = per_cpu_ptr(wq->cpu_pwqs, cpu);
	else
		// (3) For an unbound wq, use the worker_pool of this cpu's node
		pwq = unbound_pwq_by_node(wq, cpu_to_node(cpu));

	// (4) If the work is currently being executed by a worker elsewhere,
	// queue it to that worker's pool to avoid re-entrancy
	/*
	 * If @work was previously on a different pool, it might still be
	 * running there, in which case the work needs to be queued on that
	 * pool to guarantee non-reentrancy.
	 */
	last_pool = get_work_pool(work);
	if (last_pool && last_pool != pwq->pool) {
		struct worker *worker;

		spin_lock(&last_pool->lock);

		worker = find_worker_executing_work(last_pool, work);

		if (worker && worker->current_pwq->wq == wq) {
			pwq = worker->current_pwq;
		} else {
			/* meh... not running there, queue here */
			spin_unlock(&last_pool->lock);
			spin_lock(&pwq->pool->lock);
		}
	} else {
		spin_lock(&pwq->pool->lock);
	}

	/*
	 * pwq is determined and locked. For unbound pools, we could have
	 * raced with pwq release and it could already be dead. If its
	 * refcnt is zero, repeat pwq selection. Note that pwqs never die
	 * without another pwq replacing it in the numa_pwq_tbl or while
	 * work items are executing on it, so the retrying is guaranteed to
	 * make forward-progress.
	 */
	if (unlikely(!pwq->refcnt)) {
		if (wq->flags & WQ_UNBOUND) {
			spin_unlock(&pwq->pool->lock);
			cpu_relax();
			goto retry;
		}
		/* oops */
		WARN_ONCE(true, "workqueue: per-cpu pwq for %s on cpu%d has 0 refcnt",
			  wq->name, cpu);
	}

	/* pwq determined, queue */
	trace_workqueue_queue_work(req_cpu, pwq, work);

	if (WARN_ON(!list_empty(&work->entry))) {
		spin_unlock(&pwq->pool->lock);
		return;
	}

	pwq->nr_in_flight[pwq->work_color]++;
	work_flags = work_color_to_flags(pwq->work_color);

	// (5) If max_active has not been reached, put the work on pool->worklist
	if (likely(pwq->nr_active < pwq->max_active)) {
		trace_workqueue_activate_work(work);
		pwq->nr_active++;
		worklist = &pwq->pool->worklist;
	// otherwise put the work on the temporary list pwq->delayed_works
	} else {
		work_flags |= WORK_STRUCT_DELAYED;
		worklist = &pwq->delayed_works;
	}

	// (6) Insert the work into the chosen worklist
	insert_work(pwq, work, worklist, work_flags);

	spin_unlock(&pwq->pool->lock);

}
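Step (5) of __queue_work() is easier to follow with a small userspace model. The sketch below is not kernel code: `struct model_pwq`, `model_queue()` and `model_complete()` are hypothetical names that only imitate the `nr_active` / `max_active` bookkeeping. The completion side is not shown in this section, so the model simply assumes that one delayed work is activated whenever an active slot is released.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical, simplified stand-in for struct pool_workqueue. */
struct model_pwq {
    int nr_active;   /* works currently counted as active */
    int max_active;  /* concurrency limit of this pwq */
    int nr_delayed;  /* works parked on the delayed list */
};

/*
 * Imitates step (5): returns true when the work becomes active right away,
 * false when it has to wait on the delayed list.
 */
bool model_queue(struct model_pwq *pwq)
{
    assert(pwq->max_active > 0);
    if (pwq->nr_active < pwq->max_active) {
        pwq->nr_active++;
        return true;
    }
    pwq->nr_delayed++;
    return false;
}

/*
 * Assumed completion path: release one active slot and, if a work is
 * waiting, promote it from the delayed list to active.
 */
void model_complete(struct model_pwq *pwq)
{
    assert(pwq->nr_active > 0);
    pwq->nr_active--;
    if (pwq->nr_delayed > 0 && pwq->nr_active < pwq->max_active) {
        pwq->nr_delayed--;
        pwq->nr_active++;
    }
}
```

With `max_active` set to 2, the third queued work lands on the delayed list and only becomes active after one of the first two completes.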

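1.5.2 flush_work()

Flushes a given work, that is, waits until the work has finished executing.

How can we tell that an asynchronous work has finished? A trick is used here: a new work, wq_barrier, is inserted right behind the target work. Once wq_barrier has been executed, the target work must already have finished.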

kernel/workqueue.c:

flush_work()->start_flush_work()->insert_wq_barrier()

    /**
     * flush_work - wait for a work to finish executing the last queueing instance
     * @work: the work to flush
     *
     * Wait until @work has finished execution. @work is guaranteed to be idle
     * on return if it hasn't been requeued since flush started.
     *
     * Return:
     * %true if flush_work() waited for the work to finish execution,
     * %false if it was already idle.
     */
    bool flush_work(struct work_struct *work)
    {
        struct wq_barrier barr;

        lock_map_acquire(&work->lockdep_map);
        lock_map_release(&work->lockdep_map);

        if (start_flush_work(work, &barr)) {
            // Wait for the completion signal of the barr work
            wait_for_completion(&barr.done);
            destroy_work_on_stack(&barr.work);
            return true;
        } else {
            return false;
        }
    }

| →

    static bool start_flush_work(struct work_struct *work,
                                 struct wq_barrier *barr)
    {
        struct worker *worker = NULL;
        struct worker_pool *pool;
        struct pool_workqueue *pwq;

        might_sleep();

        // (1) If the work's worker_pool is NULL, the work has already finished
        local_irq_disable();
        pool = get_work_pool(work);
        if (!pool) {
            local_irq_enable();
            return false;
        }

        spin_lock(&pool->lock);
        /* see the comment in try_to_grab_pending() with the same code */
        pwq = get_work_pwq(work);
        if (pwq) {
            // (2) If the worker_pool referenced by the work's pwq differs from
            //     the one obtained above, the work has already finished
            if (unlikely(pwq->pool != pool))
                goto already_gone;
        } else {
            // (3) If the work has no pwq and is not being executed right now
            //     either, the work has already finished
            worker = find_worker_executing_work(pool, work);
            if (!worker)
                goto already_gone;
            pwq = worker->current_pwq;
        }

        // (4) The work has not finished: insert the barr work behind it
        insert_wq_barrier(pwq, barr, work, worker);
        spin_unlock_irq(&pool->lock);

        /*
         * If @max_active is 1 or rescuer is in use, flushing another work
         * item on the same workqueue may lead to deadlock. Make sure the
         * flusher is not running on the same workqueue by verifying write
         * access.
         */
        if (pwq->wq->saved_max_active == 1 || pwq->wq->rescuer)
            lock_map_acquire(&pwq->wq->lockdep_map);
        else
            lock_map_acquire_read(&pwq->wq->lockdep_map);
        lock_map_release(&pwq->wq->lockdep_map);

        return true;
    already_gone:
        spin_unlock_irq(&pool->lock);
        return false;
    }

|| →

    static void insert_wq_barrier(struct pool_workqueue *pwq,
                                  struct wq_barrier *barr,
                                  struct work_struct *target,
                                  struct worker *worker)
    {
        struct list_head *head;
        unsigned int linked = 0;

        /*
         * debugobject calls are safe here even with pool->lock locked
         * as we know for sure that this will not trigger any of the
         * checks and call back into the fixup functions where we
         * might deadlock.
         */
        // (4.1) The handler of the barr work is wq_barrier_func()
        INIT_WORK_ONSTACK(&barr->work, wq_barrier_func);
        __set_bit(WORK_STRUCT_PENDING_BIT, work_data_bits(&barr->work));
        init_completion(&barr->done);

        /*
         * If @target is currently being executed, schedule the
         * barrier to the worker; otherwise, put it after @target.
         */
        // (4.2) If the work is currently being executed by a worker,
        //       insert the barr work into that worker's scheduled list
        if (worker)
            head = worker->scheduled.next;
        // Otherwise insert the barr work into the normal worklist, right
        // behind the target work, and set the WORK_STRUCT_LINKED flag
        else {
            unsigned long *bits = work_data_bits(target);

            head = target->entry.next;
            /* there can already be other linked works, inherit and set */
            linked = *bits & WORK_STRUCT_LINKED;
            __set_bit(WORK_STRUCT_LINKED_BIT, bits);
        }

        debug_work_activate(&barr->work);
        insert_work(pwq, &barr->work, head,
                    work_color_to_flags(WORK_NO_COLOR) | linked);
    }

||| →

    static void wq_barrier_func(struct work_struct *work)
    {
        struct wq_barrier *barr = container_of(work, struct wq_barrier, work);

        // (4.1.1) The barr work has run: signal completion
        complete(&barr->done);
    }
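The barrier trick used by flush_work() can be illustrated with a single-threaded userspace model. Everything here is hypothetical (`struct barrier_work`, `barrier_enqueue()`, `barrier_insert_after()`, `barrier_run_until()`); the sketch only covers the case where the target is still queued, uses a plain flag instead of a completion, and ignores the case where the target is already running and the barrier goes onto the worker's scheduled list.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical miniature work item; not the kernel's struct work_struct. */
struct barrier_work {
    struct barrier_work *next;
    void (*func)(struct barrier_work *self);
    bool executed;
};

/* Handler shared by ordinary works and the barrier: record that it ran. */
void barrier_work_fn(struct barrier_work *self)
{
    self->executed = true;
}

/* Appends @work at the tail of the FIFO list headed by @head. */
void barrier_enqueue(struct barrier_work **head, struct barrier_work *work)
{
    work->next = NULL;
    work->executed = false;
    work->func = barrier_work_fn;
    while (*head)
        head = &(*head)->next;
    *head = work;
}

/* Links @barr directly behind @target, so it can only run after @target. */
void barrier_insert_after(struct barrier_work *target,
                          struct barrier_work *barr)
{
    assert(target != NULL && barr != NULL);
    barr->func = barrier_work_fn;
    barr->executed = false;
    barr->next = target->next;
    target->next = barr;
}

/* Runs works in list order until @stop has executed; returns how many ran. */
int barrier_run_until(struct barrier_work **head,
                      const struct barrier_work *stop)
{
    int ran = 0;

    while (*head) {
        struct barrier_work *w = *head;

        *head = w->next;
        w->func(w);
        ran++;
        if (w == stop)
            break;
    }
    return ran;
}
```

Because the list is processed strictly in order, observing that the barrier has executed is enough to conclude that the target has executed as well, while works queued behind the barrier may still be waiting.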

2. Workqueue external interface functions

The workqueue mechanism implemented by CMWQ is wrapped into a set of external interface functions.

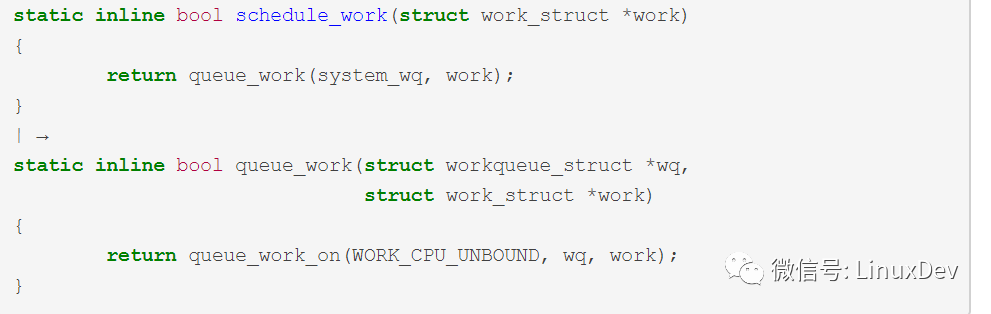

2.1 schedule_work()

Queues the work on the system default workqueue system_wq. WORK_CPU_UNBOUND means the work is handled by a worker created by the normal worker_pool bound to the current CPU.

kernel/workqueue.c:

schedule_work()->queue_work_on()->__queue_work()

2.2 schedule_work_on()

Same as schedule_work(), except that the caller can specify the CPU on which the work runs.

kernel/workqueue.c:

schedule_work_on()->queue_work_on()->__queue_work()

2.3 schedule_delayed_work()

Starts a timer. When the timer expires, delayed_work_timer_fn() is called to queue the work on the system default workqueue system_wq.

kernel/workqueue.c:

schedule_delayed_work()->queue_delayed_work()->queue_delayed_work_on()->__queue_delayed_work()

    static inline bool schedule_delayed_work(struct delayed_work *dwork,
                                             unsigned long delay)
    {
        return queue_delayed_work(system_wq, dwork, delay);
    }

| →

    static inline bool queue_delayed_work(struct workqueue_struct *wq,
                                          struct delayed_work *dwork,
                                          unsigned long delay)
    {
        return queue_delayed_work_on(WORK_CPU_UNBOUND, wq, dwork, delay);
    }

|| →

    bool queue_delayed_work_on(int cpu, struct workqueue_struct *wq,
                               struct delayed_work *dwork, unsigned long delay)
    {
        struct work_struct *work = &dwork->work;
        bool ret = false;
        unsigned long flags;

        /* read the comment in __queue_work() */
        local_irq_save(flags);

        if (!test_and_set_bit(WORK_STRUCT_PENDING_BIT, work_data_bits(work))) {
            __queue_delayed_work(cpu, wq, dwork, delay);
            ret = true;
        }

        local_irq_restore(flags);
        return ret;
    }
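The test_and_set_bit() guard in queue_delayed_work_on() is what makes a second queueing attempt on an already pending work return false. A minimal userspace sketch of that idea, using C11 atomics, could look like this. The names `struct pending_work`, `pending_queue_once()` and `pending_start_execution()` are hypothetical, and the assumption that the PENDING flag is cleared just before the handler runs comes from outside the code shown in this section.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>

#define MODEL_PENDING 1UL /* stand-in for the WORK_STRUCT_PENDING bit */

/* Hypothetical work item carrying only the flag word and a counter. */
struct pending_work {
    atomic_ulong data;
    int times_queued;
};

/*
 * Imitates the guard in queue_delayed_work_on(): only the caller that
 * flips PENDING from 0 to 1 queues the work; every other caller gets false.
 */
bool pending_queue_once(struct pending_work *work)
{
    unsigned long old = atomic_fetch_or(&work->data, MODEL_PENDING);

    if (old & MODEL_PENDING)
        return false; /* already pending, nothing to do */
    work->times_queued++;
    return true;
}

/* Assumed worker side: clear PENDING right before running the handler. */
void pending_start_execution(struct pending_work *work)
{
    unsigned long old = atomic_fetch_and(&work->data, ~MODEL_PENDING);

    assert(old & MODEL_PENDING);
    (void)old;
}
```

Once the flag has been cleared, the same work can be queued again, which matches the `ret = true` path of the kernel function.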

||| →

    static void __queue_delayed_work(int cpu, struct workqueue_struct *wq,
                                     struct delayed_work *dwork,
                                     unsigned long delay)
    {
        struct timer_list *timer = &dwork->timer;
        struct work_struct *work = &dwork->work;

        WARN_ON_ONCE(timer->function != delayed_work_timer_fn ||
                     timer->data != (unsigned long)dwork);
        WARN_ON_ONCE(timer_pending(timer));
        WARN_ON_ONCE(!list_empty(&work->entry));

        /*
         * If @delay is 0, queue @dwork->work immediately. This is for
         * both optimization and correctness. The earliest @timer can
         * expire is on the closest next tick and delayed_work users depend
         * on that there's no such delay when @delay is 0.
         */
        if (!delay) {
            __queue_work(cpu, wq, &dwork->work);
            return;
        }

        timer_stats_timer_set_start_info(&dwork->timer);

        dwork->wq = wq;
        dwork->cpu = cpu;
        timer->expires = jiffies + delay;

        if (unlikely(cpu != WORK_CPU_UNBOUND))
            add_timer_on(timer, cpu);
        else
            add_timer(timer);
    }

|||| →

    void delayed_work_timer_fn(unsigned long __data)
    {
        struct delayed_work *dwork = (struct delayed_work *)__data;

        /* should have been called from irqsafe timer with irq already off */
        __queue_work(dwork->cpu, dwork->wq, &dwork->work);
    }
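The two paths of __queue_delayed_work(), immediate queueing for a zero delay and timer-driven queueing otherwise, can be sketched with a tick-based userspace model. The names `struct model_dwork`, `model_queue_work()`, `model_queue_delayed()` and `model_tick()` are hypothetical, and the plain `>=` comparison ignores jiffies wraparound, which real kernel timers handle.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical model of a delayed work: a timer plus a "queued" flag. */
struct model_dwork {
    bool timer_armed;
    unsigned long expires; /* absolute tick at which the timer fires */
    bool queued;           /* true once the work reached the workqueue */
};

/* Stand-in for __queue_work(): only records that the work was queued. */
void model_queue_work(struct model_dwork *dw)
{
    dw->queued = true;
}

/* Imitates __queue_delayed_work(): a zero delay bypasses the timer. */
void model_queue_delayed(struct model_dwork *dw, unsigned long now,
                         unsigned long delay)
{
    assert(!dw->timer_armed);
    if (!delay) {
        model_queue_work(dw);
        return;
    }
    dw->expires = now + delay;
    dw->timer_armed = true;
}

/*
 * Called once per simulated tick; on expiry it plays the role of
 * delayed_work_timer_fn(). Wraparound of the tick counter is ignored.
 */
void model_tick(struct model_dwork *dw, unsigned long now)
{
    if (dw->timer_armed && now >= dw->expires) {
        dw->timer_armed = false;
        model_queue_work(dw);
    }
}
```

A work queued with delay 0 at tick 100 is queued immediately, while one queued with delay 3 stays off the workqueue until the tick counter reaches 103.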

Documentation/workqueue.txt


Original title: 魅族內核團隊: Linux Workqueue (Meizu Kernel Team: Linux Workqueue)

Source: WeChat ID LinuxDev, WeChat official account Linux閱碼場. Please credit the source when reposting.
